Information, Language, and Computation

Welcome

Hello • Manahuu • Olá • Hallo • こんにちは • Sawubona • 안녕하세요 • नमस्ते • Bonjour • مرحبًا • Merhaba • Aloha • Cześć • Mabuhay • سلام • Aaniin • Привет • ᎣᏏᏲ • 你好 • Dia duit!

We humans are intelligent, sentient, conscious, embodied beings. We can know, learn, and communicate. Machines are some of those things and can do some of those things. They can’t live a human life, though! But perhaps there are some things about the human experience that machines can give us insight into.

Three things.

- Information is that which informs. Or more precisely, the resolution of uncertainty. Information can be stored, transmitted, and processed.

- We use language to express information.

- Computation is the mechanical generation of new information from old information, where mechanical means in a sequence of steps that each take a finite amount of time and resources.

Computations are expressed in language and are carried out by computing devices that we call machines or automata. Computing devices can be physical or abstract. The act of specifying a computation and handing it off to a machine for execution is called automation. Wikipedia defines computer science as the study of computation, information, and automation.

With automation, computation can speed up information processing to such an extent that many of our human reasoning capabilities are greatly amplified, augmented, and accelerated. Computation has greatly impacted the human experience.

Understanding information, language, and computation is empowering. Without a good understanding of these topics, one runs the risk of losing agency and being controlled by those who do.

We kind of know, intuitively, what these three words signify, but to really understand them, we need to both study their histories and develop theories—organized bodies of knowledge with explanatory and predictive powers. The great minds that have come before us have gotten us started. Let’s take a brief look at these important topics.

Theories are technical. Information, language, and computation theories use a fair amount of logical and mathematical notation. If you need to brush up, see these logic notes and these math notes.

Information

Let’s start with information.

First question: how do we represent (encode) information?

Here’s a first shot. A symbol is a primitive unit of information, and all information, we’ll postulate, can be represented with strings of symbols. Examples:

91332.3e-81

⚠️ Stay BEHIND the yellow line! ⚠️

<ul><li>你好</li><li>ᎣᏏᏲ</li><li>ᐊᐃᓐᖓᐃ</li></ul>

/Pecs/Los Angeles/Berlin/Madrid//1 2/3 0/2 2/2 0/3 2///

(* (+ 88 3) (- 9 57))

int average(int x, int y) {return (x + y) / 2;}

{"type": "dog", "id": "30025", "name": "Lisichka", "age": 13}

∀α β. α⊥β ⇔ (α•β = 0)

1. f3 e5 2. g4 ♛h4++

Now, where do the symbols come from?

The answer is you get to make them up.

We are going to explore Unicode. As we do, notice how each of the characters has a name. The name seems to suggest a meaning, but does it really? Do symbols have an inherent meaning? Or is it acquired somehow? If acquired, how so?
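To poke at these questions, here is a minimal Python sketch using the standard `unicodedata` module. The helper name `describe` is made up for illustration. It lists the code point and official Unicode name of each character in a string. The name is a label assigned by the standard, which is worth keeping in mind when asking whether a symbol's meaning is inherent.

```python
import unicodedata

def describe(text):
    """Return (character, code point, Unicode name) for each character in text."""
    return [
        (ch, f"U+{ord(ch):04X}", unicodedata.name(ch, "<no name>"))
        for ch in text
    ]

# Three symbols from very different traditions, each with an assigned name.
for ch, code_point, name in describe("A♛π"):
    print(ch, code_point, name)
```

Try it on the strings from the examples above and see which names match your expectations.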

There is an established discipline called Information Theory that we won’t cover in depth here. You can take full-length college courses on this topic. It is huge! The genesis of the theory is attributed to Claude Shannon whose 1948 paper A Mathematical Theory of Communication is legendary—perhaps one of the most influential scientific papers of all time. Among the many contributions of the paper, Shannon:

- Identified the fundamental problem of communication, namely “that of reproducing at one point either exactly or approximately a message selected at another point.”

- Defined the participants in a communication system: messages are produced by an information source, and transmitted as (encoded) signals over a possibly noisy channel, and received (decoded) for a destination.

- Showed that statistics was an inherent part of communication: “...significant aspect is that the actual message is one selected from a set of possible messages.”

- Introduced the concept of entropy as a measure of uncertainty in a message. (Low entropy means less uncertainty and therefore requires fewer bits to describe; high entropy means more uncertainty and therefore requires more bits to describe.) Entropy is incredibly important in communication, cybersecurity, data compression, machine learning, and many other fields.

- Noted that logarithmic functions are good (perhaps the best) measures of information: a device with two stable states can hold one bit of information; a device with four stable states can hold two bits of information; a device with 10 stable states can hold one decimal digit of information, and so on. (For a readable explanation of why logs work so well, see this primer.)
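The last two points can be made concrete with a few lines of Python. This is a sketch, not a full treatment: the function names `bits_for_states` and `entropy` are chosen here for illustration, and the entropy formula used is the standard one, H = -Σ p·log₂(p), applied to a list of outcome probabilities.

```python
import math

def bits_for_states(n):
    """Bits of information held by a device with n equally likely stable states."""
    return math.log2(n)

def entropy(probabilities):
    """Shannon entropy in bits; outcomes with probability 0 contribute nothing."""
    return sum(-p * math.log2(p) for p in probabilities if p > 0)

print(bits_for_states(2))    # two stable states
print(bits_for_states(4))    # four stable states
print(math.log10(10))        # ten stable states, measured in decimal digits
print(entropy([0.5, 0.5]))   # a fair coin: maximal uncertainty for two outcomes
print(entropy([0.9, 0.1]))   # a biased coin: less uncertainty, fewer bits
```

Changing the base of the logarithm only changes the unit (bits for base 2, decimal digits for base 10).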

You probably have a sense of why data compression is important. The world has generated a lot of stored and transmitted electronic information. How much? If interested, start at Wikipedia’s article on the Zettabyte Era.

Language

There are many ways to describe what we mean by the word language. Here’s just one of many: In a TPWKY podcast appearance, Steven Mithen, author of The Language Puzzle, describes language as a system of communication comprised of discrete units with shared, often arbitrary meanings, combined using specific rules to generate and interpret complex thoughts.

How can we study language?

Our approach in this course will move deliberately from abstract to embodied then back again. We’ll look at language three ways, in this order:

- Language as Mathematics. We are going to study what language and computation can be, in principle, given unlimited time, unlimited memory, and perfect rules. This is a world of complete precision, suitable for constructed, toy, domain-specific, and programming languages.

- Language as Biology, Archaeology, and Culture. We are going to study what human language actually is, given six million years of evolution, a social primate brain, and no instruction manual. This is a world of contingency and improvisation.

- Language as Statistics and Engineering. We are going to study what language looks like to a system that has read almost everything humans have ever written, with no body, no history, and no communicative need. This is a world of pattern without experience. We will learn what that can and cannot tell us about the other two.

We’ll be using the following domains of study for the three perspectives:

| Domain of study | View of language | Lens | World |
|---|---|---|---|
| Formal Language Theory | Language is formal, mathematical, and precise. Languages are sets of strings generated by rules. | Math | “Complete Precision” |
| Human Language & Linguistics | Language has evolved under biological, historical, and social forces. | Culture | “Contingency and Improvisation” |
| Large Language Models | Language is patterns emerging from neural networks using attention and transformers. | Engineering | “Pattern without Experience” |

Exploring these three perspectives of language in this order creates a pleasing arc. We begin with the mathematical view of language as pure structure. We then study the biological, cultural, and embodied aspects through evolutionary and anthropological linguistics, seeing language as something that grew, messily, over millions of years. We return to the quantitative realm viewing language as patterns that emerge from data and training.

No single view is complete. The goal of the course is to leave you suspicious of any account of language (or of mind, or of thought, or of communication) that speaks from only one of these perspectives. Along the way we will encounter theories that were once widely accepted but are now considered outdated or incomplete.

Computation

If language is the expression of information, then computation is its processing.

The human understanding of, and application of, computing has evolved over millennia. It has arguably enhanced human capabilities. There is a history here that is empowering to know. History is an essential component of the study of any discipline. However:

[Computing is] complete pop culture. The big problem ... [is that] the electronic media we have is so much better suited for transmitting pop-culture content than it is for high-culture content. I consider jazz to be a developed part of high culture. [Jazz aficionados take deep pleasure in knowing the history of jazz, but] pop culture holds a disdain for history. Pop culture is all about identity and feeling like you're participating. It has nothing to do with cooperation, the past, or the future—it's living in the present. I think the same is true of most people who write code for money. They have no idea where [their culture came from]. —Alan Kay

Whoa. He’s right. Let’s do something about this.

A Brief History of Computation

Here are some important events, people, works, and machines to be aware of in order to have a proper context for the study of computation.

In Ancient Times

Since antiquity, humans have been marking, matching, comparing, tallying, measuring, and reckoning.

A Big Step

Early inventories featured pictorial markings like 🌽🌽🌽🌽🌽 🍓🍓🍓. Then someone figured out they could save space by writing something like 5🌽 3🍓. An incredibly powerful idea: symbols representing quantities!

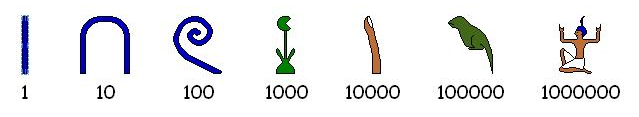

Numerals!

Early numeral systems like the Egyptian system were additive, but in the modern era, positional systems have become universal.
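The difference between additive and positional systems can be sketched in Python. Assumptions to note: the sign names below are the descriptions commonly given for the Egyptian hieroglyphic signs for powers of ten, written as plain English words rather than hieroglyphs, and all function names are invented for this illustration.

```python
# Commonly cited descriptions of the Egyptian signs for powers of ten.
EGYPTIAN_SIGNS = [
    (1_000_000, "Heh"), (100_000, "tadpole"), (10_000, "finger"),
    (1_000, "lotus"), (100, "rope coil"), (10, "heel bone"), (1, "stroke"),
]
VALUE_OF = {sign: value for value, sign in EGYPTIAN_SIGNS}

def additive(n):
    """Write n additively: repeat each sign as many times as needed."""
    signs = []
    for value, sign in EGYPTIAN_SIGNS:
        count, n = divmod(n, value)
        signs.extend([sign] * count)
    return signs

def additive_value(signs):
    """In an additive system, the value is just the sum; order does not matter."""
    return sum(VALUE_OF[sign] for sign in signs)

def positional_value(digits, base=10):
    """In a positional system, each digit is weighted by its position."""
    total = 0
    for digit in digits:
        total = total * base + digit
    return total

print(additive(123))
print(additive_value(reversed(additive(123))))  # shuffling signs keeps the value
print(positional_value([1, 2, 3]), positional_value([3, 2, 1]))  # order matters
```

Notice that the additive form needs six signs for 123, while the positional form needs three digits and a symbol for zero when a position is empty.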

Recipes for Calculation

Recipes, or lists of instructions, for manipulating quantities and measurements were sometimes recorded. Notable examples include:

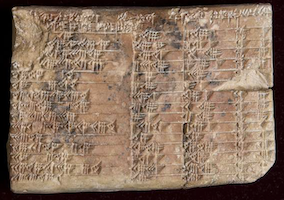

- Babylonian Tablets 1800-1600 BC

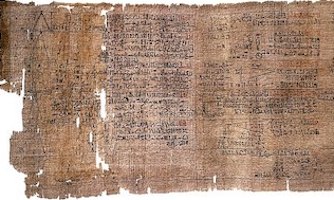

- Rhind Mathematical Papyrus (~1550 BC)

- Euclid’s greatest common divisor method (~300 BC)

- Eratosthenes’ method for enumerating primes (~240 BC)

- Muḥammad ibn Mūsā al-Khwārizmī’s Al-Jabr (813-833), which included systematic solutions for linear and quadratic equations
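Two of the recipes in this list are short enough to sketch in Python. These are modern renderings, not reconstructions of the ancient texts: the greatest common divisor function uses the remainder-based form usually taught today, and the function names `gcd` and `primes_up_to` are chosen here for illustration.

```python
def gcd(a, b):
    """Euclid's method: replace (a, b) with (b, a mod b) until b is zero."""
    while b:
        a, b = b, a % b
    return a

def primes_up_to(limit):
    """Sieve of Eratosthenes: cross out multiples of each prime in turn."""
    if limit < 2:
        return []
    is_prime = [False, False] + [True] * (limit - 1)
    n = 2
    while n * n <= limit:
        if is_prime[n]:
            # Smaller multiples of n were already crossed out by smaller primes.
            for multiple in range(n * n, limit + 1, n):
                is_prime[multiple] = False
        n += 1
    return [n for n, flag in enumerate(is_prime) if flag]

print(gcd(1071, 462))
print(primes_up_to(30))
```

Both are recipes in exactly the sense above: a finite list of instructions that a person could carry out by hand.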

Calculating Machines

While humans could follow recipes and carry out reckoning with their fingers and toes, or use tally marks on bones, gains in efficiency and accuracy naturally arose with the creation of mechanical devices for calculation. Here are just a few examples:

- Abacus (earliest known from Sumeria 2700–2300 BC)

- Astrolabe (~200 BC)

- Antikythera mechanism (~200 BC)

- Slide Rule (1620s)

- Pascal’s calculators (1640s)

- Jacquard Loom (1804)

- Babbage’s Difference Engine (1820s) and Analytical Engine (1837)

- Hollerith’s Tabulating Machine (1880s)

The Analytical Engine was fascinating historically. Although never built, it was perhaps the world’s first programmable, general-purpose computer. Several programs are known to have been written for the device, the most famous of which, a program for the computation of Bernoulli numbers, was presented by Babbage’s collaborator Ada Lovelace in her translator’s notes to Luigi Menabrea’s article in Taylor’s Scientific Memoirs in 1843. (Also see this shorter summary). Lovelace is often considered the world’s first programmer. She is also widely admired as being perhaps the first to recognize the wide application of computing beyond arithmetic, writing that the Analytical Engine

might act upon other things besides number, were objects found whose mutual fundamental relations could be expressed by those of the abstract science of operations, and which should be also susceptible of adaptations to the action of the operating notation and mechanism of the engine.... Supposing, for instance, that the fundamental relations of pitched sounds in the science of harmony and of musical composition were susceptible of such expression and adaptations, the engine might compose elaborate and scientific pieces of music of any degree of complexity or extent.
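For a sense of the kind of calculation Lovelace's famous program targeted, here is a short Python sketch that computes Bernoulli numbers exactly. It is not a rendering of her program for the Analytical Engine. It uses a standard modern recurrence (the sum of C(m+1, k)·B_k for k from 0 to m equals 0 when m ≥ 1, with B_0 = 1), and the function name is invented here. Indexing and sign conventions for Bernoulli numbers vary between sources; this sketch uses the convention in which B_1 = -1/2.

```python
from fractions import Fraction
from math import comb

def bernoulli_numbers(count):
    """Return [B_0, ..., B_(count-1)] as exact fractions (B_1 = -1/2 convention)."""
    numbers = []
    for m in range(count):
        if m == 0:
            numbers.append(Fraction(1))
            continue
        # Solve the recurrence for B_m using the numbers already computed.
        total = sum(comb(m + 1, k) * numbers[k] for k in range(m))
        numbers.append(-total / (m + 1))
    return numbers

for index, value in enumerate(bernoulli_numbers(9)):
    print(index, value)
```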

Philosophers and Mathematicians Take Interest

The 19th century saw wild advances in formal logic and the foundations of mathematics. Logic broke free of Aristotelian syllogisms. Cantor introduced us to the paradise of multiple infinities. People started discovering paradoxes and began not only to worry about how to put mathematics on a rigorous, consistent footing, but also to try to define what math even was. Three philosophies emerged:

- Logicism: Math is just logic

- Intuitionism: Math is only what we invent and can construct or demonstrate

- Formalism: Math is done by manipulating symbols according to rules

Major works of this era include:

- Boole’s Boolean Algebra (1847)

- Frege’s Begriffsschrift (1879)

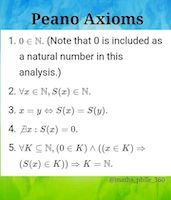

- Peano’s Axioms (1889)

- Hilbert’s Program (early 1920s)

- Russell and Whitehead’s Principia Mathematica (1910–1913)

People learned that formalizing mathematics, or even giving it a logical foundation, was not easy. Kurt Gödel, in 1931, actually proved that any attempt at providing a consistent, formal, axiomatic basis for mathematics (that included arithmetic with multiplication) could never capture all truths. A shocking result at the time!

Computation Gets Formalized

Though Gödel showed there could be no logically consistent and complete formalization of all of mathematics, the question of whether there existed an effective, finite, decision procedure for all statements of mathematics remained open. (Yay, we’re back to computation!) This question is known as the Entscheidungsproblem. Hilbert thought such a procedure existed. Others did not. To prove him wrong, one first had to formalize the very notion of an algorithm. The three main approaches of the 1930s were:

- Church’s Lambda Calculus

- The μ-recursive Functions (mostly worked out by Gödel, Kleene, and others)

- Turing Machines

Church and Turing both showed the Entscheidungsproblem had no solution. Poor Hilbert.
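For a small taste of the first approach in the list above, here is a sketch of Church numerals written with Python lambdas. This borrows Python's function syntax to imitate the Lambda Calculus and is only an illustration: the names `zero`, `succ`, `add`, `mul`, and `to_int` are the conventional ones for this encoding, and `to_int` steps outside the calculus to display results. The idea is that the number n is represented by "apply a function n times."

```python
# A Church numeral n takes a function f and returns f composed with itself n times.
zero = lambda f: lambda x: x
succ = lambda n: lambda f: lambda x: f(n(f)(x))

# Arithmetic is built from function application alone.
add = lambda m: lambda n: lambda f: lambda x: m(f)(n(f)(x))
mul = lambda m: lambda n: lambda f: m(n(f))

def to_int(n):
    """Convert a Church numeral to a Python int by counting applications."""
    return n(lambda k: k + 1)(0)

two = succ(succ(zero))
three = succ(two)

print(to_int(add(two)(three)))
print(to_int(mul(two)(three)))
```

Nothing here uses built-in numbers until `to_int` is called; functions alone carry the computation.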

Story Time: We’ll go over the story of Church, Gödel, and Turing in class only. You can’t find everything in the notes!

Alan Turing

Turing’s paper is one of the most impactful and significant of all time. In this work:

- He gave a formalization of effective computation (algorithms) that people could actually understand—significant because previous models were extremely dense (Church’s) or reliant on advanced mathematics (Gödel’s).

- He gave evidence as to why his formalization, now called the Turing Machine, coincides with our intuitive notion of “computable” (the Church-Turing Thesis).

- He introduced computational universality: programs were just data (ok so far, not surprising) and, wait for it, a single machine could emulate, or “run,” any other machine ‼️🤯😮😳🤩. Before this work, it was not known (but it may, we don’t know, have been suspected by some) that a truly universal computer could exist—separate machines were thought to be necessary for different tasks.

- He showed that some numbers were not computable, some functions were not computable, and that some questions could not be mechanically decided—and hence, that the Entscheidungsproblem had no solution—a result shocking to many (but not all) mathematicians of the era.
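The "programs are just data" idea can be felt in a toy simulator. The sketch below is not Turing's universal machine construction. It is a small Python function, with invented names (`run`, `INCREMENT`, `FLIP`), that takes a machine description as a plain dictionary and executes it. The same `run` function carries out any machine handed to it as a table mapping (state, symbol) to (symbol to write, head move, next state).

```python
BLANK = "_"

def run(table, tape, state="start", halt="halt", max_steps=10_000):
    """Simulate a one-tape machine described by a transition table."""
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    head, steps = 0, 0
    while state != halt:
        if steps == max_steps:
            raise RuntimeError("step budget exhausted")
        symbol = cells.get(head, BLANK)
        write, move, state = table[(state, symbol)]
        cells[head] = write
        head += {"L": -1, "R": 1, "N": 0}[move]
        steps += 1
    if not cells:
        return ""
    span = range(min(cells), max(cells) + 1)
    return "".join(cells.get(i, BLANK) for i in span).strip(BLANK)

# Machine 1: add one to a binary number (head starts on the leftmost digit).
INCREMENT = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", BLANK): (BLANK, "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "N", "halt"),
    ("carry", BLANK): ("1", "N", "halt"),
}

# Machine 2: flip every bit.
FLIP = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", BLANK): (BLANK, "N", "halt"),
}

print(run(INCREMENT, "1011"))
print(run(FLIP, "1011"))
```

The step budget is a practical guard only; as the last bullet suggests, there is no general way to decide in advance whether an arbitrary machine will halt.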

Turing is revered as the founder of computer science because of this work. The ideas that there are limits to computation, equivalent ways to express computation, and the existence of a truly universal computing machine make computer science a discipline. For now, watch this video, ignoring the misleading click-baity title (incompleteness is a feature, not a flaw):

In case your only previous exposure to Turing was the movie The Imitation Game, remember that movies aren’t history, and change events for dramatic effect. Here’s a brief video pointing out a few of the inaccuracies of the movie, some rather insulting to Turing himself, and to his many collaborators:

Electronic Computing Machines

Turing's Universal Machine (1936) opened up a flood of new work in general purpose machines. Wikipedia’s History of Computing Hardware page covers both ancient devices and a fair number of machines from the 1930s–1960s. It is worth a look to see how we eventually got to the electronic devices that are ubiquitous today.

Programming Languages

von Neumann once angrily asked “Why would you ever want more than machine language?” Today that question sounds silly. Even assembly language (co-invented by Kathleen Booth) is too low-level. One of the biggest proponents of high-level languages was Grace Hopper, who also happened to write the first compiler. Here are some notable high-level languages:

- Fortran, COBOL, LISP

- Algol, PL/1, Simula

- APL, J, K, Q

- CLU, Alphard, Euclid, Mesa, Cedar, Turing, Ada

- BCPL, B, C, C++, D, Rust, Zig, Odin, Swift

- Java, C#, Scala, Kotlin

- Perl, PHP, Hack, Tcl, Python, Ruby, Groovy, Lua

- Simula, Smalltalk, Object Pascal, Eiffel

- Concurrent Pascal, Occam, Linda, SR, Erlang, Elixir, Parasail, Chapel, Go

- Self, JavaScript, ActionScript, CoffeeScript, TypeScript

- Standard ML, CAML, OCaml, F#, Elm, Reason

- Miranda, Haskell, PureScript, Idris

- Prolog, Mercury, Oz, Curry

- Agda, Coq, Lean

- Snobol, Icon

- Id, Val, Sisal

- SQL, Cypher, SPARQL

- Postscript

- MATLAB, R, Julia, Mojo

- Intercal, Unlambda, Brainfuck, Malbolge, Befunge, ZT, Java2K, LOLCODE

- GolfScript, Pyth, CJam, Jelly, 05AB1E, Stax

Language Implementation

Languages have to not only be expressive, but they have to be efficiently implementable. This is why we study compilers and interpreters. Two major parts of this field are:

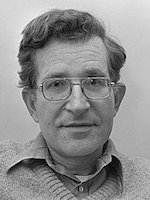

- Formal Language Theories (from Chomsky and others) that enable parsing to be efficient

- Optimizations (Frances Allen was a major figure in this area)

Human-Centric Computing

People create programs for people. Areas with people-focus include:

- Graphics and user interfaces

- Computing in education

- Libraries for creative computing

Modern Trends

Trends in the modern computing era:

- Personal computers (1970s)

- Internet (1980s)

- Web (1990s)

- Mobile (2000s)

- Cloud (2010s)

- Generative AI (2020s)

- Quantum Computing (?)

Other Histories

Computing history is so much richer than the brief outline above. The following are highly recommended:

- Gabriel Robins’ remarkable A Brief History of Computing

- Wikipedia’s Computing History article

- Wikipedia’s Computing Timeline article

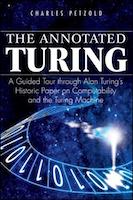

- Charles Petzold’s fabulous book The Annotated Turing

You can also take a few minutes to watch Jeff’s historical overview:

Beyond History

Computing is so much more than its technical core, its theoretical concepts, or even its history. Computing is a human activity. Computations, programming languages, and machines are designed by humans for humans.

Grady Booch is building a remarkable transmedia documentary on the intersection of computing and the human experience.

The web version introduces itself as:

The story of computing is the story of humanity: this is a story of ambition, invention, creativity, vision, avarice, power, and serendipity, powered by a refusal to accept the limits of our bodies and our minds. Computing: The Human Experience is a transmedia project that explores the science of computing, examines the connections among computing, individuals, society, and nations, and by considering the history of computing contemplates the forces that will shape its future.

The story of computing is “powered by a refusal to accept the limits of our bodies and our minds”

It is worth immersing yourself in this content for hours.

Major Themes

During humans’ creation of a science, art, and craft of computing, several major themes have emerged. They form the core of the field that we now call computer science. Among these themes, or big ideas, are:

- Symbols and symbol manipulation allow us to compute abstract families of problems, which greatly amplifies our thought processes.

- Theoretical computing devices (such as Turing Machines) simplify our inquiry into the nature of computation, enabling deep understanding of the concepts and providing a foundation for further innovation.

- Universal computation is far, far from obvious, but we take it for granted today.

- Modern computers speed up time to such an extent that what we know and what we can know are qualitatively different from what we could know before computing.

- Programming languages enable the expression of computation at such a high level that creative and impactful computing becomes accessible to the masses.

Theories and Practices

In studying any discipline, humans create theories. A theory is an organized body of knowledge with explanatory and predictive powers. There are four major theories of computation:

- Language Theory, dealing with how computations are expressed

- Automata Theory, dealing with how computations are carried out

- Computability Theory, dealing with what is and is not computable

- Complexity Theory, dealing with how hard (in terms of resources like time and space) computations are

Theories are important! Without them, understanding is fragmented, superficial, and lacks a vocabulary for effective communication. Theories are essential for both absorbing and creating new knowledge.

The practice of computing involves gaining proficiency in crafting computations (programs) in dozens of different programming languages and in many different programming styles, applying concepts, patterns, and idioms. It also includes the design and implementation of new programming languages and even new machines.

What is the best kind of practice to make the theory come alive, and build a deeper understanding of computation?

Answer: Designing one’s own language and implementing compilers and interpreters for it.

The Deeper Philosophical Context

There is, in the universe—or at least in our conception of it—a kind of fault line between the denotational (what exists?) and the operational (how do we know or produce something?). Rather than approaching the topic through analytic philosophy, here is a rough table of complementary themes. Some of these will be debatable and perhaps slightly inaccurate, and maybe there’s some overlap and nuance, but hopefully you can tell what we’re aiming for.

| Denotational | Operational |
|---|---|
| What is it? | How does it work? |
| Being | Becoming |
| Truth | Proof |
| Mathematics | Computer Science |
| Declarative | Imperative |
| Statements | Commands |
| Existence | Construction |
| Models | Inference Rules |
| Classical Logic | Intuitionistic Logic |
| Dogma | Evidence |
| Faith | Reason |
| Platonism | Constructivism |
| Logos | Praxis |
| State | Transition |
| Objects (Democritus) | Processes (Heraclitus) |
| Substance | Function |
| Statics | Dynamics |
| Structure | Behavior |
| Data | Algorithm |
| Specification | Execution |
| Meaning | Evaluation |
| Constraints | Search |
| Functions as Sets | Functions as λ-calculus expressions |
| Languages as Sets | Grammars and Recognizers |
| Ontological | Phenomenological |

The study of computation forces us to confront these complementary themes and to understand how they interact with, oppose, and complement each other.
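Two rows of the table ("Functions as Sets" and "Languages as Sets") can be illustrated with a short Python sketch. Everything here is chosen for illustration: the squaring function, the language of strings made of some number of a's followed by the same number of b's, and all the names. Each thing is described twice, once as a set (what it is) and once as a procedure (how to produce or check it).

```python
from itertools import product

# Denotational: a function is a set of (input, output) pairs.
SQUARE_AS_SET = {(x, x * x) for x in range(5)}

# Operational: a function is a rule for producing the output.
square = lambda x: x * x

def language_as_set(max_n):
    """Denotational: the language is a set of strings (listed up to a bound)."""
    return {"a" * n + "b" * n for n in range(max_n + 1)}

def recognizes(s):
    """Operational: a recognizer that decides membership for one string."""
    half, remainder = divmod(len(s), 2)
    return remainder == 0 and s == "a" * half + "b" * half

def all_strings(alphabet, max_len):
    """Every string over the alphabet with length at most max_len."""
    for length in range(max_len + 1):
        for letters in product(alphabet, repeat=length):
            yield "".join(letters)

accepted = {s for s in all_strings("ab", 6) if recognizes(s)}
print(accepted == language_as_set(3))
print(SQUARE_AS_SET == {(x, square(x)) for x in range(5)})
```

The two descriptions agree on every case checked, yet they answer different questions, which is the point of the table.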

Recall Practice

Here are some questions useful for your spaced repetition learning. Many of the answers are not found on this page. Some will have popped up in lecture. Others will require you to do your own research.

- What does sawubona mean? It is a Zulu greeting that means "I see you". It is a bit more than just a simple hello, as it signifies respect for, validation of, and the presence of the (whole) person you are acknowledging.

- What can’t intelligent machines do? Intelligent machines cannot live a human life.

- What is information? Information is that which informs. Information is the resolution of uncertainty.

- Who is credited with launching Information Theory? Claude Shannon

- What kind of measures are used for information? Logarithmic ones.

- What is a symbol? A primitive unit of information.

- What is Unicode? Unicode is a standardized system for encoding, representing, and handling text expressed in most of the world's writing systems.

- What are three ways of looking at language? (1) As mathematics, (2) as biology, archaeology, and culture, and (3) as statistics and engineering.

- What is computation? The formal, mechanical, generation of new information from old information.

- What is a programming language? A language for expressing computations.

- What is an automaton? A machine for carrying out computations.

- According to Alan Kay, why is computing history not widely known? Because pop culture, which computing arguably is, holds a disdain for history.

- What is the significance of numerals? They allow large quantities to be expressed with fewer symbols.

- Show how to represent the quantity twenty-one thousand two hundred thirty-seven using Egyptian Numerals:

- What are some ancient media on which early recipes for calculation were recorded? Babylonian Tablets and the Rhind Mathematical Papyrus.

- What are some early famous nontrivial algorithms? Euclid’s greatest common divisor method and Eratosthenes’ method for enumerating primes.

- From whose name do we get the word “algorithm”? Muḥammad ibn Mūsā al-Khwārizmī

- What were some of the accomplishments of Al-Khwārizmī? He wrote Al-Jabr, which included systematic solutions for linear and quadratic equations.

- Where and when were abaci first known to be used? Sumeria 2700–2300 BC.

- What could the Antikythera mechanism do? It was an analog computer that could predict astronomical positions and eclipses.

- Babbage is known for designing two machines. Which ones? The Difference Engine and the Analytical Engine.

- Was the Analytical Engine ever built? No.

- What was Ada Lovelace’s published program in her famous Notes? A program for the computation of Bernoulli numbers.

- What was Lovelace most known for besides the first published program? She was arguably the first to recognize and to write about the wide application of computing beyond arithmetic.

- What are the three major philosophies about what math is? Logicism, Formalism, and Intuitionism.

- Who is the guy most associated with the formalist movement in mathematics, so much so that he felt formal systems could capture all of mathematics through proof and computation? David Hilbert.

- What was Hilbert’s Program? A call to people of his time to formalize all of mathematics.

- What did George Boole introduce to the world? Boolean Algebra.

- What did Hilbert call Cantor’s beautiful work on infinities? “Cantor’s paradise.”

- What did the logicists think math was? What did the formalists think it was? The intuitionists? Logicists: a branch of logic. Formalists: a game of manipulating symbols according to rules. Intuitionists: an invention for constructing objects and facts about them.

- What was the Entscheidungsproblem? Hilbert’s question of whether there was an effective, finite, decision procedure for all statements of mathematics.

- What were the three major attempts at formalizing computation in the 1930s?

- Alonzo Church’s Lambda Calculus

- Kurt Gödel’s Mu-Recursive Functions

- Alan Turing’s Turing Machines

- How did Church formalize the notion of effective computability? With the Lambda Calculus.

- How did Turing formalize the notion of effective computability? With the Turing Machine.

- What was Gödel's initial reaction to Church’s Lambda Calculus, and what did this reaction motivate Gödel to do? He was skeptical that the Lambda Calculus was a model for effective computability (because it was so simple), so he created his own model, the μ-recursive functions.

- After Church demonstrated the equivalence of the Lambda Calculus and the μ-recursive functions, what was Gödel’s reaction? He thought his own model, the μ-recursive functions, must be insufficient.

- When did Gödel finally accept the Lambda Calculus as a model for effective computability? After Turing showed that the Lambda Calculus and Turing Machines were equivalent. All agreed that Turing machines were a sufficient model, thus the other two approaches must also be.

- Name four stunning achievements of Turing’s famous paper. Turing (1) gave a formalization of effective computation, (2) showed that his formalization coincided with our intuitive notion of “computable”, (3) introduced computational universality, and (4) showed that some numbers (and by extension some functions) were not computable.

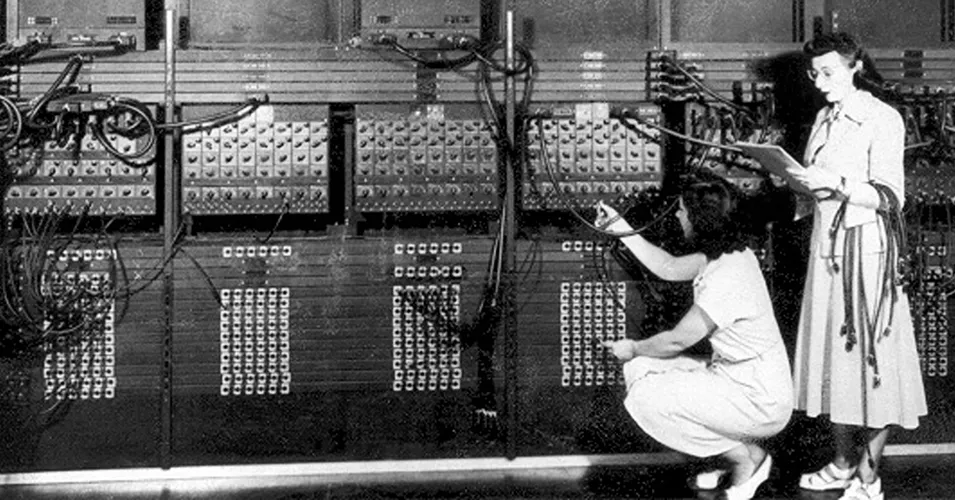

- Why was the ENIAC famous? It was the first general-purpose electronic computer.

- Who were the ENIAC six? Kay McNulty, Jean Bartik, Betty Holberton, Marlyn Meltzer, Frances Spence, and Ruth Teitelbaum.

- Who wrote the first compiler and what was it called? Grace Hopper. The A-0 System.

- Why are programming languages important? They enable the expression of computation at such a high level that creative and impactful computing becomes accessible to the masses.

- Why is LISP so loved? Simple syntax and semantics, homoiconicity, macros.

- Why is Ruby so loved? It is extremely expressive, allows for rapid development, and has excellent metaprogramming facilities.

- Why is CLU so significant? It introduced data abstraction, iterators, and exception handling.

- What else is Barbara Liskov known for besides designing CLU? She introduced the Liskov Substitution Principle.

- What was Noam Chomsky’s biggest contribution to computer science? He introduced the Chomsky Hierarchy, which served as a foundation for modern parsing theory.

- What is Frances Allen known for? She was a (if not the) major figure in the field of compiler optimization. It is said that among all the optimizations performed in modern compilers, she probably came up with or at least described 80% or more of the most important ones.

- What year did Allen win the Turing Award? 2006.

- What even is Generative AI? AI that can create new things, like images, music, code, or text.

- Where can I find more histories of computing? Gabriel Robins’ A Brief History of Computing, Wikipedia’s Computing History article, Wikipedia’s Computing Timeline article, and Charles Petzold’s The Annotated Turing.

- What can I find at Computing: the Human Experience? (You should visit and explore the site to answer) Histories, timelines, stories, articles, films, interactive exhibits, and educational resources on computing and its impact on humanity (considering individuals, cultures, nations, and societies).

- What are the four major theories of computation? Language Theory, Automata Theory, Computability Theory, and Complexity Theory.

- Learning about computing requires studying languages and automata. Why do we study these two subjects? Languages are for expressing computations, and automata are for carrying out computations.

- What is meant by the denotational vs. the operational, in a philosophical sense? The denotational is concerned with what is (being, truth, existence, declaration, classical logic, dogma, faith, platonism), while the operational is concerned with how things work, or how to know or prove something (becoming, proof, construction, program execution, intuitionistic logic, evidence, reason, constructivism).

- Is computation denotational or operational? Operational.

- Why might math be considered denotational while computer science is considered operational? Mathematics often focuses on abstract truths and existence, whereas computer science emphasizes processes and constructions.

Summary

We’ve covered:

- Earliest notions of computing

- Numbers as abstractions of quantities

- Numerals as representations of numbers

- Earliest known recordings of recipes for calculations

- Early computing machines

- Influence of the analytical engine

- Ada Lovelace's insights

- Math in the late 1800s

- The formalization of computing

- Undecidability

- Human-centric computing

- The modern era in computing

- Several big ideas of computing

- Computing Theories

- The Denotational and the Operational