Threads

Definitions

Here are the real, most generic, least language-specific, definitions:

A program is the static source code of a computation.

In the context of an operating system, a process is a program in execution. The operating system allocates resources to a process—such as memory, file handles, and sockets—and manages its execution. A thread is a line of execution within a process. A process can have multiple threads, which share the same memory space and resources allocated to the process.

Programmers need to know the difference between processes and threads. They need to know that the operating system manages (including the scheduling of) the threads within a process. Each thread has its own handle within the O.S. These “real” threads are seen by application as kernel threads. An application may manage its own smaller, super lightweight threads, which are allocated by the language runtime (not the O.S.) onto the kernel threads. These lightweight threads are called user threads.

Language-specific termsIn Java, kernel and user threads are wrapped as platform and virtual threads, respectively.

In Erlang, the units of concurrent execution are called processes, even though they do not map to a single operating system process.

In Go, the term goroutine is used for a lightweight thread managed by the Go runtime.

In Python, the term greenlet is used for a lightweight thread managed by the Python runtime.

A task is a more general term that can refer to a unit of work that can be executed concurrently. A task can be a thread, a process, or even a function that is scheduled to run at some point in the future. It’s a pretty generic term for “something that runs concurrently with other things.” Some languages, notably Ada, have a keyword task, so it’s important to keep the theoretical and general definitions separate from that of a specific language.

Thread States

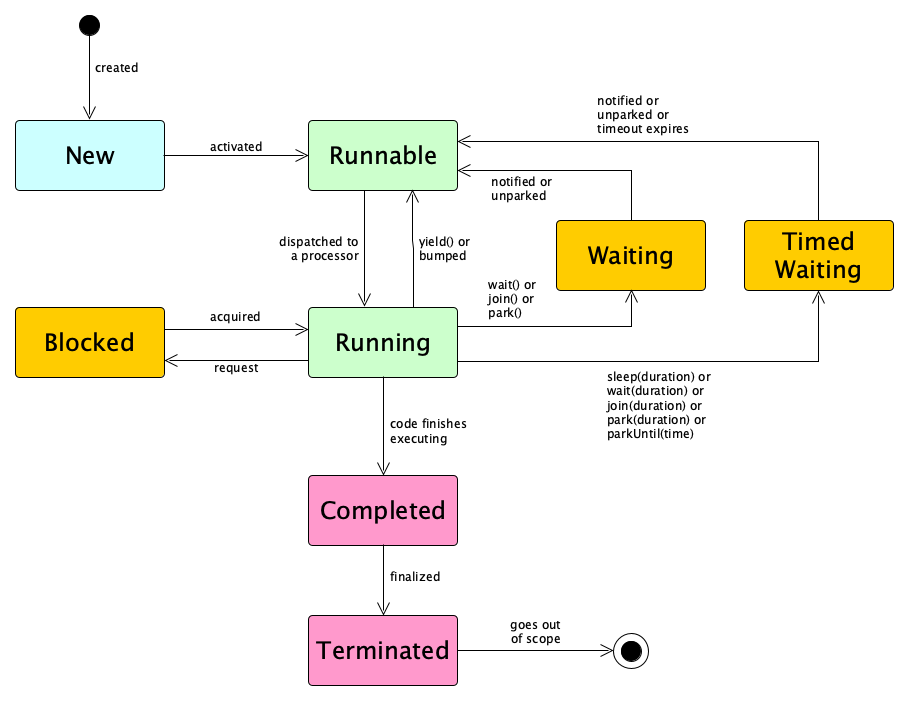

Threads go through various states during their lifecycle. A few of note:

- New: The thread is created but not yet started.

- Activating: It just started but is still getting initialized before it can run.

- Runnable: The thread is ready to run and waiting for CPU time.

- Running: The thread is currently executing.

- Blocked: The thread is waiting for a resource or lock to become available.

- Waiting: The thread is waiting indefinitely for a notification.

- Timed Waiting: The thread is waiting for a specified period of time.

- Completed: The thread ”code” is done.

- Finalizing: The thread is completed but it’s still doing some cleanup before it can terminate.

- Terminated: The thread has fully completed its execution.

Some thread models have only very few states. Some may have all of these. Some may even have more. Things are so different from language to language, and from environment to environment. Some of the fundamental ideas are common across environments, though. Here’s a simple sketch, not tied to any particular programming language:

Thread Synchronization

When multiple threads are running concurrently, they often need to notify each other about...well anything, really (such as the thread started, finished, is waiting, will be sleeping, has a problem or that the other thread needs to wait, continue, or stop), and often need to coordinate their actions to avoid conflicts, which is a nice way of having the threads say “hey let’s not completely step all over each other destroying the integrity of shared mutual data”.

Communication and coordination are both aspects of a broader topic known as synchronization.

The communication part can be done by direct message passing, notifying a global scheduler, or using various synchronization objects. Or the runtime system itself may be able to detect what’s going on and do the communication itself.

The coordination part is generally applied to the case in which shared mutable resources are visible to multiple threads.

There are probably three major types of synchronization (or at least three major categories):

Barrier Synchroni-zation

Barriers, latches, exchangers, and phasers are used to synchronize multiple threads at specific points in their execution, ensuring that they reach a certain point before continuing.

Resource Synchroni-zation

Locks, mutexes, and semaphores are used to control access to shared resources, ensuring that only one thread can access a resource at a time.

Condition Synchroni-zation

Condition variables allow threads to wait for certain conditions to be met (such as a queue being not full or not empty) before proceeding.

Barriers

Barrier synchronization is about threads waiting to get to certain points in their execution before proceeding. Here are few types of barriers:

CyclicBarrier

A cyclic barrier allows a fixed number of threads to wait for each other at a barrier point. It is cyclic because it can be reused once the threads are released, unlike a countdown latch. When the specified number of threads reach the barrier, they are all released to continue execution. This is useful for updating shared state or performing actions when all threads reach a common point.

CountDownLatch

A countdown latch allows one or more threads to wait until a certain number of operations in other threads are completed. It is initialized with a count, and threads decrement the count when they complete a task. Threads waiting on the latch are released when the count reaches zero.

Phaser

A phaserprovides synchronization points (phases) and can dynamically register and deregister threads. It is useful when the number of participating threads may vary during execution.

Shared Resources

Shared mutable resources open the potential for race conditions. Race conditions can be benign or harmful. Preventing harmful race conditions can be handled in one of four ways.

The first two simply make the problem go away! You can remove the threads or you can remove the shared data:

Single-Threaded Concurrency

Having only a single thread makes it easier to avoid contention. The code is still conceptually concurrent, of course.

Message Passing Only

Remove the shared state completely. All threads have local storage only and communicate strictly through message passing.

The next two accept that you have threads and shared resources, but adopt techniques to prevent the race conditions from destroying integrity:

Mutual Exclusion

Accept that shared mutable state exists, and use locking mechanisms to control access to it.

Software Transactional Memory

Optimistically allow threads to do their thing, but check for conflicts before actually committing.

We will cover single-threaded concurrency in separate notes on coroutines and asynchronous programming.

We will cover message passing in separate notes.

We will cover mutual exclusion in separate notes. These cover strategies such as explicit locking; structured (implicit-ish) critical sections; devices such as semaphores; atomic operations; and thread-safe data structures. Because condition synchronization is so closely related to mutual exclusion, we will also cover condition variables in those notes.

You can read about Software Transactional Memory at Wikipedia.

Thread Local Storage

Although there’s a lot of knowledge surrounding mechanisms for mutual exclusion to protect shared mutable state, sometimes you actually need to be careful and note things that should not be shared. Sometimes every thread needs it own copy of an entity. That is, we sometimes need thread local storage. Common use cases are:

- User and session tracking in web apps, for things like user data, authentication tokens, or session IDs.

- Database connections, where each thread manages its own connection without complex connection pooling.

- Transaction information

- Anything too clunky to pass around as parameters, such as logging configurations, date formatters, or other expensive objects.

Recall Practice

Here are some questions useful for your spaced repetition learning. Many of the answers are not found on this page. Some will have popped up in lecture. Others will require you to do your own research.

- What is a program? A program is the static source code of a computation.

- What is a process, in the context of an operating system? A process is a program in execution, managed by the operating system.

- What is a thread, in the context of an operating system? A thread is a line of execution within a process, sharing the same memory space and resources with other threads in the process.

- What is a task? A task is a unit of work that can be executed concurrently. It can be a thread, process, or function.

- What is the difference between kernel threads and user threads? Kernel threads are managed by the operating system, while user threads are managed by the language runtime.

- How does Erlang use the term “process”? In Erlang, the term "process" refers to a unit of concurrent execution, which does not map directly to a single operating system process.

- What terms are used by Java, Python, and Go for the extremely lightweight user threads? Java uses "virtual threads", Python uses "greenlets", and Go uses "goroutines".

- What are some thread states? New, Runnable, Running, Blocked, Waiting, Timed Waiting, Completed, Finalizing, and Terminated.

- What is the difference between a Waiting state and a Timed Waiting state? A Waiting state is indefinite, while a Timed Waiting state has a specified duration.

- What is synchronization in the context of threads? Synchronization is the coordination and communication between threads to avoid conflicts and ensure correct execution.

- What are three types of synchronization? Barrier synchronization, resource synchronization, and condition synchronization.

- What are four devices used in barrier synchronization? Cyclic barriers, countdown latches, phasers, and exchangers.

- What is a cyclic barrier? A cyclic barrier allows a fixed number of threads to wait for each other at a barrier point, and can be reused once the threads are released.

- What is a countdown latch? A countdown latch allows one or more threads to wait until a certain number of operations in other threads are completed, releasing waiting threads when the count reaches zero.

- What are four ways to deal with harmful race conditions? Single-threaded concurrency, message passing only, mutual exclusion, and software transactional memory.

- How does single-threaded concurrency address the problem of contention for shared resources? Single-threaded concurrency avoids contention by having only a single thread, making it easier to manage shared resources.

- How does message passing (only) address the problem of contention for shared resources? Message passing only avoids shared mutable state by ensuring all threads communicate through messages, eliminating the need for shared resources.

- What is thread local storage? Thread local storage is a mechanism that allows each thread to have its own copy of certain data.

- What are some common use cases for thread local storage? User and session tracking, database connections, transaction information, and expensive objects like logging configurations.

- What is the most well-known downside of thread locals? Memory leaks are likely without careful management.

Summary

We’ve covered:

- Programs, Processes, Threads, and Tasks

- Thread States

- The problem of mutable, shared state

- Three approaches to avoid race conditions

- Thread Local Storage