An Introduction to Human Language

Getting Started

There are at least three ways to look at language:

- Mathematical view (Formal language theory): Languages are sets of strings over an alphabet.

- Evolutionary view (Human Language): Language is a product of evolution, shaped by natural selection and cultural transmission.

- Probabilistic view (Language models): Language is a system of probabilities and patterns, often modeled statistically and generated via neural networks.

We studied formal languages earlier in the course. Now let’s learn about human language.

Many people have looked into the question of what language is, where it came from, why we have it, what we can do with it, and what language might tell us about ourselves. Researchers have come from archaeology, anthropology, psychology, linguistics, computer science, philosophy, cognitive science, genetics, ethology, neuroscience, and many other fields.

The notes you are reading now are based on Steven Mithen’s 2024 book The Language Puzzle. You should read this book. It’s really good. It looks at language as a puzzle with many fragments:

- The frame of the puzzle is made from:

  - Studying the last 6 million years of human history (back to the time of the last common ancestor, or LCA, of humans and chimpanzees)

  - The nature of language itself (words, rules, etc. and the basis for linguistic diversity)

- The interior fragments are assembled from:

  - Studying the calls and vocalizations of monkeys and apes as a foundation

  - Fossil evidence for the development of the vocal tract and the size and shape of the brain

  - Archaeological evidence for symbolic behavior (art, ornaments, etc.) and the use of fire and tools

  - Understanding words themselves, iconic words and arbitrary words, and changes in word meanings, pronunciations, and roles

  - How infants acquire language, and how language is transmitted between generations

  - How language impacts thought, such as how the use of metaphor shapes complex abstract concepts

  - Whether and how much of a genetic predisposition exists for language acquisition

Mithen identifies and assembles these fragments to form a compelling and modern understanding of language evolution.

The field of linguistics evolves

Previously influential theories have been revised, shied away from, or replaced as new studies have found the old ones lacking.

- Charles Hockett in the 1960s identified “16 design features” of language: 9 that we share with other primates (vocal-auditory channel, broadcast transmission, transitoriness, interchangeability, total feedback, specialization, semanticity, arbitrariness, discreteness) and 7 that are uniquely human (displacement, productivity, cultural transmission, duality of patterning, prevarication, reflexiveness, learnability). But recent research has found these features to be a “non-starter” as a framework for evolutionary linguistics.

- Noam Chomsky from the 1960s onward argued for Universal Grammar (UG): an innate Language Acquisition Device hardwired into the human brain, with a common deep structure underlying all human languages. The poverty of the stimulus argument held that children could not possibly learn grammar from input alone. But cross-linguistic typology has since revealed far more structural variation across languages than UG predicted, and modern approaches such as the computationally modeled Iterated Learning Model (ILM) suggest that general learning mechanisms may be sufficient.

- Steven Pinker’s The Language Instinct (1994) argued that language is a Darwinian biological instinct shaped by natural selection specifically for communication, much like echolocation in bats. Critics find some aspects of this view outdated as (1) it is sympathetic to UG, (2) the use of the term “instinct” does not account for social interaction and cultural immersion, and (3) language evolves faster than a genetic explanation would seem to allow.

Language plays a huge role in computer science, cognitive science, and artificial intelligence. A good understanding of human language is crucial for developing natural language processing systems and effective language models.

It’s also fun to contrast the precision of formal languages and programming languages with the messiness, ambiguities, redundancies, and exceptions of evolved human languages. Maybe that’s why LLMs kind of work—all this stuff is rolled into probabilities and patterns in a network not unlike the human brain (with its long-distance connections and distributed processing).

Language Evolution

We clearly use language differently than our nearest relatives (the chimpanzees), who communicate with gestures, facial expressions, and vocalizations, and can learn ASL signs but can’t seem to organize the signs recursively. Our LCA was probably very much like today’s chimpanzees.

The language we have today evolved with us. A few of the developments that Mithen assembles in The Language Puzzle are:

- The LCA (6 mya) and other forest-dwelling primates had small brains and rough vocal tracts to make holistic calls, which they could do little more than make faster or slower, or louder or softer.

- Global climate became more arid 4 mya so hominins had to adapt to (predator-rich) grassland environments, where living in larger groups and learning signs (for communication and alliance building) helped. Genetic mutations led to more cognitive skills, statistical learning, tool usage, stone throwing, bipedalism to cover larger distances, and larger brains.

- The larger brains, smaller teeth, and flatter faces led to more efficient vocal tracts that could produce significantly more sounds. Calls no longer needed to be holistic: they could be made up of smaller meaningful units which became vowels and consonants, and iconic words arose.

- Larger brains + bipedalism led to babies being born earlier: childhood provided the cultural transmission bottleneck to allow syntax to emerge.

- 1.5 mya Broca’s area appeared, which is shared neural real estate for language and manual dexterity (such as tool making and use). Mirror neurons appeared which fire both when you act and when you observe action.

- As humans spread through different environments, they encountered never-before-seen animals, plants, topographies, hazards, and weather conditions that needed describing. At first, all the new words were iconic, but generalizations ultimately formed.

- Arbitrary words often arose due to repeated mishearings, mispronunciations, misunderstandings, and social conventions. The drive toward efficiency mattered too, simplifying long words and making some easier to say.

- More evolutionary changes gave our species, Homo sapiens, more complex cognitive fluidity: a larger cerebellum, a more globular brain shape, smaller occipital lobes, and larger neural networks led to words for abstract concepts, different categories of words, and metaphor. Humans began storytelling, imagined a supernatural realm of ghosts and spirits and demons, and gained the ability to persuade and mislead.

Utterances

Modern language is characterized by combining words into utterances, according to various rules.

Utterances can be spoken, signed, or written.

Utterances have meanings which are influenced not only by the words themselves but also by prosody—intonation, stress, rhythm, tempo, and pauses in spoken language, and by body posture, movements, pauses, and facial expressions in signed languages.

Come up with utterances (sentences or phrases) using different forms of prosody to convey meaning changes such as statements vs. questions, emphasis, sarcasm, irony, etc.

If utterances are made up of words, then what, exactly, are words?

Words

Exactly what counts as a word is kind of hard to pin down, and varies between languages. It’s typically understood to be a unit of meaning that can stand alone in an utterance. There’s a lot of variation among languages, especially in the orthographic representations: think of agglutinative languages like Finnish, Hungarian, Turkish, Japanese, Swahili, Korean, Quechua, and Tamil.

Tokenization in LLMs

This is exactly why tokenization is hard and why modified Byte-Pair Encoding (BPE) is a compromise, not a solution. When an LLM tokenizes your prompt, it is not operating on “words” in any linguistically meaningful sense.
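To see why BPE tokens are not words, here is a minimal sketch of the core merge loop. It is a toy: it works on characters rather than bytes, the helper names (`learn_bpe`, `merge_pair`, `tokenize`) and the tiny training list are made up for illustration, and production tokenizers add many refinements not shown here.

```python
from collections import Counter


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe(words, num_merges):
    """Learn up to `num_merges` merges from a list of training words."""
    # Every word starts out as a sequence of single characters.
    corpus = Counter(tuple(w) for w in words)
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in corpus.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # most frequent adjacent pair
        merges.append(best)
        merged = Counter()
        for symbols, freq in corpus.items():
            merged[merge_pair(symbols, best)] += freq
        corpus = merged
    return merges


def tokenize(word, merges):
    """Apply the learned merges, in order, to a new word."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


# Hypothetical training data, chosen only to make the merges easy to follow.
merges = learn_bpe(["low", "low", "lower", "lowest"], num_merges=2)
print(tokenize("lowly", merges))
```

The token boundaries fall wherever the training statistics put them, which may or may not line up with morphemes. Try training on a few words from an agglutinative language and see what comes out.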

Basic Terminology

Here are some linguistic terms useful in the study of spoken words, just to get started:

- Spoken Word: a unit of meaning that can stand alone in an utterance, made up of one or more phonemes

- Phoneme: a single speech sound

- Vowel: a phoneme produced without significant constriction of the vocal tract

- Consonant: a phoneme produced with some constriction of the vocal tract

- Syllable: a sound unit larger than a phoneme, typically a vowel together with any consonants around it

- Phonology: the study of the functional patterns of speech sounds, specifically how phonemes create meaning

- Morpheme: a part of a word that contributes meaning

- Morphology: How words (tokens) are made up of smaller pieces

- Syntax: How words are structured to form phrases and sentences

- Semantics: What sentences mean

- Pragmatics: How language is used to communicate ideas and concepts

Lexical and Grammatical Words

Words are often divided into lexical and grammatical words:

| Lexical Words | Grammatical Words |
|---|---|
| Carry meaning | Function words, glue of syntax |
| Can stand alone | Cannot stand alone |
| Open class, meaning new members can be added freely (to google, selfie, ghosting) | Closed class, new words pretty much never added, and they dominate in frequency analysis |
| Example categories in English: Nouns, verbs, adjectives | Example categories in English: Prepositions, conjunctions, pronouns, determiners |
| Gazillions of these | Very few of these |
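The claim that grammatical words dominate frequency counts is easy to poke at with a few lines of Python. This is only a sketch: the sentence is made up, and the `GRAMMATICAL` set is a small hand-picked sample rather than a real list of English function words, so a serious test would need an actual corpus.

```python
from collections import Counter

# Made-up sentence and a tiny hand-picked set of grammatical words (both hypothetical).
TEXT = "the dog chased the cat and the cat ran to the tree of the garden"
GRAMMATICAL = {"the", "and", "to", "of", "a", "in", "it", "but"}

tokens = TEXT.split()
counts = Counter(tokens)

# Share of all tokens that belong to the grammatical set.
grammatical_share = sum(counts[w] for w in GRAMMATICAL) / len(tokens)

print(counts.most_common(3))
print(f"{grammatical_share:.0%} of the tokens are grammatical words")
```

Even in this tiny sample a handful of closed-class words account for more than half of the tokens.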

To be honest, the boundary between these categories is not always clear-cut; for example, auxiliary verbs in English feel like they can be both.

Lexical words can range from the concrete (e.g., apple, fly, blue) to the abstract (e.g., freedom, oppression, justice).

Some words are iconic, meaning their form resembles their meaning (e.g., buzz, bang, hiss, plop), and others are arbitrary (e.g., dog, cat). Perhaps the separation here is fuzzy, too.

Lexicons

The lexicon of a language is its inventory of words (and their meanings).

The average adult English speaker knows ~50,000–100,000 words (their passive vocabulary), but frequently uses ~20,000–30,000. In theory, an unlimited number of new words can be coined, and listeners can usually figure out what they mean.

Exercise: Many Unix-like systems have a word list at /usr/share/dict/words. How many words are in that file? (Hint: use wc -l in the terminal.) Search the file (hint: use grep) for words you know but suspect might not be in the file.

Children acquire words at a remarkable pace, about 10 new words per day between ages 2–8, many of which are inferred from a single exposure in context.

Contrast this with LLMs: they are trained on trillions of tokens but don’t seem to generalize as easily.

Morphemes

A word is made up of one or more morphemes, the smallest units of meaning.

They can be lexical (anchored to a concept) or grammatical (inflection, affix), and can be either free or bound.

English examples:

- Free lexical morpheme (root), e.g. dog, happy, walk

- Bound lexical morpheme, e.g. bio (as in biology), tele (as in telephone)

- Free grammatical morpheme, e.g. and, but, the, as, of

- Bound grammatical morpheme, e.g. -s (plural), -ed (past tense), -ing (progressive), -ment (noun-forming)

Phonemes

Phonemes are the smallest units of sound in a language that can distinguish meaning. Some spoken languages have only a few dozen phonemes, while others have over a hundred. The IPA (International Phonetic Alphabet) provides a standardized way to represent these sounds.

Let’s visit the interactive IPA chart and practice pronouncing some of the phonemes.

A lot of languages are written with the Latin alphabet, even when the sounds don’t match the letters perfectly. Watch the following video to see if the Latin alphabet is a good fit for Tlingit.

Word Meanings

How do words get their meanings? It is a huge question. There are some theories, like Rosch’s Prototype Theory. The idea is that word categories aren’t defined by necessary and sufficient conditions, but are instead organized around prototypes (or best examples), such as a robin for the category "bird" (and not a penguin). Other members of the category are included based on their similarity to the prototype, leading to graded membership rather than a strict boundary.

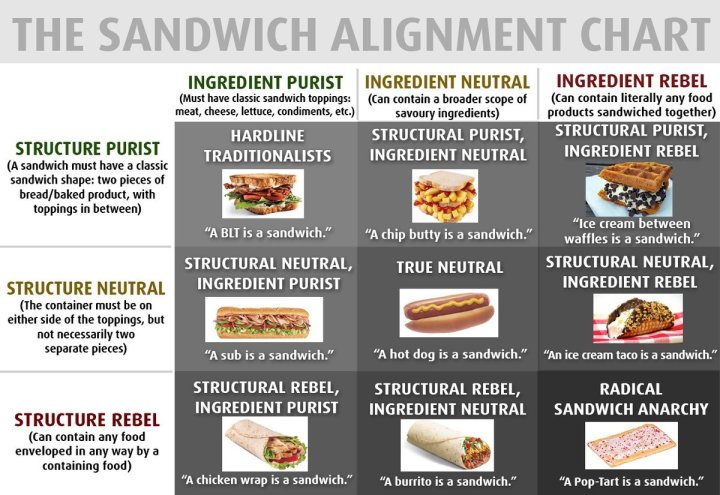

The important question is whether a hot dog, or even a pop tart, is a sandwich.

Prototype Theory in LLMs

This theory is compelling and is echoed in machine learning and LLMs: classification at category boundaries is hard, and embeddings do capture graded similarity!
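Graded membership is easy to sketch numerically. In the toy below, each bird is a hand-made feature vector (the features and values are invented for illustration, not taken from any real embedding model), the prototype is the average of the vectors, and typicality is cosine similarity to that prototype.

```python
import math

# Hypothetical features: [flies, sings, small, lays_eggs, swims]
BIRDS = {
    "robin":   [1.0, 1.0, 1.0, 1.0, 0.0],
    "sparrow": [1.0, 1.0, 1.0, 1.0, 0.0],
    "eagle":   [1.0, 0.0, 0.0, 1.0, 0.0],
    "penguin": [0.0, 0.0, 0.0, 1.0, 1.0],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def prototype(vectors):
    """The prototype is modeled here as the component-wise mean."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


bird_prototype = prototype(list(BIRDS.values()))
typicality = {name: cosine(vec, bird_prototype) for name, vec in BIRDS.items()}

# Most typical first.
for name, score in sorted(typicality.items(), key=lambda item: -item[1]):
    print(f"{name:8s} {score:.2f}")
```

Nothing here draws a line between "bird" and "not bird". Every item just gets a score, which is the point of graded membership.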

You might also check out Putnam’s Twin Earth Thought Experiment, which gives evidence for meaning not being entirely in one’s head (intensionally) but also (extensionally) within one’s environmental context.

Another theory is the Causal Theory of Reference, which suggests that words get their meaning anchored historically, through chains of use.

Lexical Relations

Words are definitely related to each other! Learn these terms about these relationships to impress your friends:

- Synonymy

  - Words with very similar meanings, close to being interchangeable but not necessarily. Examples:

    - big / large

    - happy / joyful

    - begin / start / initiate

    - watch / observe

    - smart / intelligent

- Antonymy

  - Words that have opposite meanings. Gradable antonyms are at opposite ends of a spectrum, complementary antonyms split a domain into two mutually exclusive options, and relational antonyms describe one relationship from opposite sides. Examples:

    - hot / cold (gradable)

    - big / small (gradable)

    - on / off (complementary)

    - alive / dead (complementary)

    - pass / fail (complementary)

    - buy / sell (relational)

    - parent / child (relational)

    - teacher / student (relational)

- Hyponymy

  - Words with a subcategory relationship. Examples:

    - dog is a hyponym of animal (the hypernym)

    - rose / flower

    - car / vehicle

    - poodle / dog / canine / animal

- Polysemy

  - When a word has many related meanings (can be metaphorical or metonymic or systematic). Think: one lexeme with multiple senses. Examples:

    - foot (body part / mountain base)

    - head (body part / leader / top of something)

    - run (move fast on foot / operate a business)

    - get (obtain / understand / become)

    - paper (material / academic article / publication / newspaper company)

- Homonymy

  - When words coincidentally look or sound the same, despite having completely different origins and distinct, unrelated meanings. Think: distinct lexemes sharing a surface form. Examples:

    - bat (flying mammal / baseball equipment)

    - bark (tree skin / dog sound)

    - lie (recline / falsehood)

    - right (correct / direction)

    - bank (financial institution / river bank)

    - stalk (plant stem / follow stealthily)
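Of the relations above, hyponymy has the most obvious data structure behind it: a chain (or tree) of "is a kind of" links. Here is a minimal sketch using the examples from the list. The `HYPERNYM` table and function names are made up for illustration, and real lexical databases let a word have several hypernyms, which this toy ignores.

```python
# Each word points to its immediate hypernym (a hypothetical, tiny taxonomy).
HYPERNYM = {
    "poodle": "dog",
    "dog": "canine",
    "canine": "animal",
    "rose": "flower",
    "car": "vehicle",
}


def hypernym_chain(word):
    """Walk upward from a word to its most general known category."""
    chain = [word]
    while chain[-1] in HYPERNYM:
        chain.append(HYPERNYM[chain[-1]])
    return chain


def is_hyponym_of(word, category):
    """True if `category` appears somewhere above `word` in the chain."""
    return category in hypernym_chain(word)[1:]


print(" / ".join(hypernym_chain("poodle")))
print(is_hyponym_of("poodle", "animal"))
print(is_hyponym_of("rose", "animal"))
```

Note that the relation is transitive: a poodle is an animal even though no single entry in the table says so.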

Word Vectors Encode These Relations

Embedding spaces capture many lexical relations geometrically. The famous example: king − man + woman ≈ queen. Synonymy, hyponymy, and analogy all emerge from distributional statistics; no one programmed them in.

Exercise: Revisit the analogy (king − man + woman ≈ queen). What does it mean that geometric relationships encode semantic ones?
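The arithmetic behind the king/queen analogy can be sketched with made-up vectors. The three dimensions and all the numbers below are invented so the arithmetic is easy to follow; real embeddings have hundreds of learned dimensions with no such tidy labels, and the analogy only holds approximately.

```python
import math

# Hypothetical 3-d "embeddings": [royalty, maleness, femaleness]
VECTORS = {
    "king":  [0.9, 0.9, 0.1],
    "queen": [0.9, 0.1, 0.9],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def analogy(a, b, c):
    """Return the word closest to a - b + c, excluding the three query words."""
    target = [x - y + z for x, y, z in zip(VECTORS[a], VECTORS[b], VECTORS[c])]
    candidates = [w for w in VECTORS if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(VECTORS[w], target))


print(analogy("king", "man", "woman"))
```

Subtracting man and adding woman moves the vector along a "gender" direction while leaving "royalty" alone. That is all the famous example amounts to geometrically.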

Now that we covered words (barely), what, then, are the rules?

Grammar

Words and word-like entities are combined (arranged) according to rules to form larger units like phrases and sentences. The rules constitute a grammar. Where do the rules come from? How do we know if an utterance is well-formed?

Rules or Guidelines?

In programming languages, rules are explicit and prescriptive. In spoken natural language, we tend to treat them as guidelines, but in writing, they are often more rigidly followed.

Structure, Not Meaning

A grammar will tell you where each component of an utterance should go, based on the category of the word or phrase (e.g., for English: noun, verb, adjective, noun phrase, verb phrase, subject, predicate, adverbial clause, prepositional phrase, etc.), but it won’t tell you what the utterance means. Classic examples are:

- "The cat the dog the rat chased killed ate the malt" — grammatical, incomprehensible

- "Colorless green ideas sleep furiously" (Chomsky) — grammatical, semantically absurd

Constituency and Hierarchy

Technically, the grammar composes utterances into hierarchical structures, even though they tend to be written or spoken as temporal sequences. The tree structure is what allows compositionality to work at scale.

Recursion

Recursion is the property of language that allows rules to be applied repeatedly, embedding structures within structures. This is what enables sentences to be arbitrarily long and complex, even with a finite set of rules and vocabulary.

Here’s a grammar that demonstrates recursion:

We can generate sentences as in this example:

S

⇒ NP VP

⇒ DET NOUN RP VP VP

⇒ the NOUN RP VP VP

⇒ the dog RP VP VP

⇒ the dog that VP VP

⇒ the dog that SV S VP

⇒ the dog that thought S VP

⇒ the dog that thought NP VP VP

⇒ the dog that thought PN VP VP

⇒ the dog that thought grace VP VP

⇒ the dog that thought grace TV NP VP

⇒ the dog that thought grace hit NP VP

⇒ the dog that thought grace hit PN VP

⇒ the dog that thought grace hit alan VP

⇒ the dog that thought grace hit alan DV NP PP

⇒ the dog that thought grace hit alan threw NP PP

⇒ . . .

⇒ the dog that thought grace hit alan threw the new blue toy to the fast rat

Recursion can allow sentences to become arbitrarily long and complex:

S

⇒ NP VP

⇒ NP SV S

⇒ NP SV NP VP

⇒ NP SV NP SV S

⇒ NP SV NP SV NP VP

⇒ NP SV NP SV NP SV S

⇒ . . .

⇒ she dreamed she dreamed she dreamed . . . she dreamed the dog swam
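The derivations above can be played with in code. The grammar below is a guess: the rules are inferred from the derivation steps shown (for example, NP → DET NOUN RP VP and VP → SV S), and the `ADJS` category is an assumption added so that "the new blue toy" can be produced. The generator picks rules at random and falls back to the shortest rule once it gets deep, so that it always stops.

```python
import random

# Hypothetical grammar, inferred from the derivation steps above.
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["PN"], ["DET", "NOUN"], ["DET", "ADJS", "NOUN"], ["DET", "NOUN", "RP", "VP"]],
    "VP": [["IV"], ["TV", "NP"], ["DV", "NP", "PP"], ["SV", "S"]],
    "PP": [["PREP", "NP"]],
    "ADJS": [["ADJ"], ["ADJ", "ADJS"]],  # assumed, to allow stacked adjectives
    "DET": [["the"]],
    "NOUN": [["dog"], ["toy"], ["rat"]],
    "ADJ": [["new"], ["blue"], ["fast"]],
    "PN": [["grace"], ["alan"], ["she"]],
    "RP": [["that"]],
    "IV": [["swam"]],
    "TV": [["hit"]],
    "DV": [["threw"]],
    "SV": [["thought"], ["dreamed"]],
    "PREP": [["to"]],
}


def generate(symbol="S", depth=0, max_depth=6, rng=random):
    """Expand `symbol` into a list of words by choosing rules at random."""
    if symbol not in GRAMMAR:
        return [symbol]  # a terminal: an actual word
    options = GRAMMAR[symbol]
    if depth >= max_depth:
        # Past the depth limit, always take the shortest rule so expansion ends.
        options = [min(options, key=len)]
    words = []
    for part in rng.choice(options):
        words.extend(generate(part, depth + 1, max_depth, rng))
    return words


def dream(levels):
    """Apply VP -> SV S `levels` times: 'she dreamed' wrapped around a base sentence."""
    if levels == 0:
        return ["the", "dog", "swam"]
    return ["she", "dreamed"] + dream(levels - 1)


print(" ".join(generate()))
print(" ".join(dream(3)))
```

The recursion in the grammar lives in two places: S reappears inside VP (via SV S), and VP reappears inside NP (via the relative clause). The `dream` function has no upper limit on `levels` other than the machine running it, which is the sense in which a finite grammar yields arbitrarily long sentences.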

Where Does Grammar Come From?

Chomsky thought language acquisition was too fast, and the input too impoverished, for learning alone to explain it. He put forth the Poverty of the Stimulus argument: children know grammatical rules they’ve never seen exemplified. So he proposed a kind of innate Language Acquisition Device (LAD), the basis for what became known as Universal Grammar.

Many opposing views exist. Tomasello and Christiansen argue that general learning mechanisms plus social interaction are sufficient. The computer simulations of Kirby and colleagues support this view, showing that cultural transmission can lead to the emergence of structured language over generations.

Discuss the fact that LLMs acquire language from stimulus alone, with no innate LAD, and they do remarkably well. LLMs learn grammar implicitly from the data, without explicit instruction. They can generate grammatically correct sentences, but they don’t have an explicit representation of grammatical rules like humans do. How is this possible? Are transformers just big enough to brute-force what evolution gave us for free? Or do we have something else?

More on Acquisition

Perhaps you’ve heard of pidgins (rudimentary contact languages with no native speakers and minimal grammar) or creoles (pidgins that children acquire natively, with a spontaneously developed full grammar).

You should read about how deaf children in 1980s Nicaragua invented a full sign language in one generation. Themselves. With no adults to mimic.

There seems to be a lot of evidence that given the right social conditions, grammar emerges.

Pragmatics

Pragmatics is the study of how context influences meaning: things like prosody, gestures, speaker attributes (age, gender, status), surroundings, relationship with the listener, innuendo, subtext, shared situational knowledge, and so on.

An interesting pragmatic concept is implicature: a meaning that is implied but not explicitly stated. Implicature enables efficient and sometimes polite communication. Examples:

- “If you could pass the guacamole that would be awesome.”

- “Nice store you have here. It would be a shame if anything were to happen to it.”

- “Crack the window.”

- “I was thinking we can take care of this here.” (spoken to a cop at a traffic stop with a $100 bill protruding from behind the license and registration)

Paul Grice proposed the following maxims for cooperative conversation:

- Quality: Your contribution should be true or something you have evidence for.

- Quantity: Make your contribution as informative as required, and no more.

- Relation: Be relevant.

- Manner: Be clear, brief, and orderly.

Efficient discourse is also aided by deixis: words or phrases whose reference (spatial, temporal, or personal) shifts with context, such as I, you, here, now, this, that, these, those, tomorrow, last week, the following.

How LLMs Learn Pragmatics

LLMs trained on text see Grice violations and corrections constantly. They implicitly learn cooperative norms without ever being told the rules — and without ever participating in a conversation before training ends.

Linguistic Diversity

There are ~7,000 languages in the world today, and many more that have gone extinct. They vary widely in their phonology (sound systems), morphology (word formation), syntax (sentence structure), semantics (meaning), and pragmatics (use in context).

A Sampling of Fun Facts

In no particular order, here are just a few fun facts about different languages that highlight this diversity:

- TODO

- TODO

- TODO

- TODO

- TODO

- Pro-drop languages

Naturally, there are thousands more facts that could appear here. Feel free to add some of your favorites.

Language Diversity Argues Against Universal Grammar

The study of so many languages provides a good deal of evidence against Universal Grammar.

- Apparent Lack of Recursion

  - Daniel Everett argues that the Pirahã language of Amazonian Brazil lacks recursion (embedding clauses within clauses, which Chomsky thought was universal). Everett argued this wasn’t a cognitive limitation but a cultural one: the Pirahã have a culture of extreme “immediacy of experience” that doesn’t narrate or discuss anything outside direct experience, so the language reflects that.

- Absence of numbers and counting

  - Pirahã and Munduruku, among others, lack exact number words beyond (roughly) one, two, and many. So language reflects cultural practice and need, rather than numerical cognition being universal and hardwired.

- Ergative-absolutive languages

  - UG was hypothesized when most linguists were focused on nominative-accusative languages (like Latin, English, Greek), where the subject of a transitive verb ("she kicked him") and the subject of an intransitive verb ("she ran") are treated grammatically the same. But a huge chunk of the world’s languages, including Basque, many Australian Aboriginal languages, and many Mayan languages, are ergative-absolutive: they group the object of a transitive verb with the subject of an intransitive one instead. Nominative-accusative alignment is not a universal.

- Topic-prominent languages

  - Languages like Mandarin Chinese and Japanese are topic-prominent (sentences have topic-comment structure) rather than subject-prominent (subject-predicate structure). Subject-predicate structure is not universal.

- Time without tense

  - The Hopi language of Arizona reportedly doesn’t encode time in the tense-based way that Indo-European languages do; it organizes events more around certainty and manifestation than past/present/future. Tense is not universal.

- Tonal languages

  - UG was built with a strong bias toward segmental phonology (consonants and vowels in sequence). In tone languages like Mandarin, Cantonese, Vietnamese, or languages of the Bantu family, pitch is a primary meaning-distinguishing feature at the word level.

  - Exercise: Research the grammatical features of tones in Yoruba.

- Sign languages

  - Sign languages have a spatial grammar: three-dimensional signing spaces simultaneously encode who is doing what to whom. UG would imply that spoken languages would do this too, but they don’t.

The World Atlas of Language Structures (WALS) is pretty cool, with many more examples. It shows that when linguists systematically catalogued features across thousands of languages, feature after feature that had been proposed as universal turned out to be highly variable (word order, case marking, use of morphology, how questions are formed, whether there’s a passive construction, tenses, and so on). The apparent universals mostly dissolved into tendencies or statistical preferences when real cross-linguistic data was gathered at scale.

How Languages Change

TODO - vowel shifts, simplification, grammaticalization, borrowing, word shortening, reanalysis, contact, migration, splitting communities, and other mechanisms of language change.

You can find a bunch of language family tree charts and images online. Here is one that is pretty nice:

Language and Thought

We can’t talk about language without considering its impact on thought. The question of whether and how much language influences thought (and vice versa) is complex and the subject of a lot of debate.

Linguistic Relativity

Linguistic relativity asserts that language influences worldview or cognition. You’ll sometimes hear it called the “Sapir-Whorf hypothesis,” of which there are two main versions:

Strong: Linguistic Determinism

Language determines thought — we cannot think what we cannot say. Now largely discredited.

Weak: Linguistic Relativity

Language influences habitual thought. Now supported empirically. Classic example: speakers tell two colors apart faster when their language places those colors on opposite sides of a color-term boundary.

The Wikipedia page on linguistic relativity provides a good overview.

There are a few interesting examples of how language can (or may) shape thought:

Spatial Language (Levinson)

English and other languages are egocentric: the terms left, right, front, back are relative to the speaker. But in Guugu Yimithirr direction is absolute: things are north, south, east, west, always, even indoors, even in the dark.

Number and Language

Pirahã may not have exact numbers beyond one, two, few, many. But they get along fine without number words.

Inner Speech

We often think in language, but not always. Visual-spatial reasoning, musical thought, mathematical intuition are not obviously linguistic. Language may be a formatting layer that makes thoughts communicable and memorable, not the medium of thought itself.

Immediacy of Experience

The Pirahã, and their immediacy of experience, again:

Signs and Symbols

Charles Sanders Peirce identified three types of signs:

- Icons

  - Resemble what they represent: a photo, a map, onomatopoeia (buzz)

- Indices

  - Causally connected to their referent: smoke → fire; pointing

- Symbols

  - Arbitrary, conventional: most words; red = stop

Human language is overwhelmingly symbolic. This is extraordinary! No other species uses symbols at scale. And we’ve been doing it for a long time:

- Ochre engravings at Blombos Cave, South Africa: ~75,000 BCE — geometric patterns, possibly symbolic

- Shell beads used as ornaments: ~100,000 BCE — symbols of identity, group membership

- Cave paintings (Lascaux, Chauvet): ~30,000–17,000 BCE — iconic + possibly symbolic

Symbols are fascinating because they have no intrinsic connection to their referent. They require (a) shared convention, (b) memory, (c) the ability to hold “word” and “meaning” as linked but separate. The power is unlimited generativity — you can make a symbol for anything, even abstractions: justice, infinity, recursion, love, LLM, the meaning of life, etc.

But where do symbols come from? How does a word hook onto the world? Philosophers call this the symbol grounding problem. Harnad (1990) argues that symbols in a closed formal system only refer to other symbols; they’re not grounded. Note that a dictionary defines words with more words. There is no base word. Humans ground symbols through perception and action.
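The dictionary point can be made concrete with a toy. In the made-up dictionary below, every word is defined only with other words from the same dictionary, and following definitions always leads back to a word already visited. The entries and the `follow` helper are invented for illustration, and always taking the first word of a definition is an arbitrary simplification.

```python
# A hypothetical closed dictionary: every definition uses only other entries.
TOY_DICTIONARY = {
    "dog": ["canine", "animal"],
    "canine": ["dog", "animal"],
    "animal": ["living", "thing"],
    "living": ["alive"],
    "alive": ["living"],
    "thing": ["object"],
    "object": ["thing"],
}


def is_closed(dictionary):
    """True if every word used in a definition is itself an entry."""
    return all(word in dictionary for words in dictionary.values() for word in words)


def follow(word):
    """Keep looking up the first word of each definition until one repeats."""
    path = [word]
    while True:
        next_word = TOY_DICTIONARY[path[-1]][0]
        path.append(next_word)
        if next_word in path[:-1]:
            return path  # we are back at a word we have already seen


print(is_closed(TOY_DICTIONARY))
print(" -> ".join(follow("animal")))
```

Because the dictionary is finite and closed, every lookup chain has to loop eventually. Nothing inside the system ever points at an actual dog.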

Joint Attention

Before you can teach a word, both parties must be attending to the same thing. Infants develop a (proto-linguistic) joint attention at ~9 months. Without joint attention, ostensive definition (“that’s a dog”) doesn’t work.

Pointing is surprisingly rare in non-human primates.

Connections to Formal Languages and LLMs

The modern view is that language didn’t evolve for communication alone. It co-evolved with social complexity, material culture, symbolic thought, and music. Mithen advocated the Hmmmmm hypothesis, which posits that modern language was preceded by a “proto-language” that was holistic, manipulative, multi-modal, musical, and mimetic.

Human language is not just words and grammar. It requires signs, symbols, social cognition, and the ability to manipulate and understand these elements in complex ways.

Formal languages are just words and rules. We covered them earlier.

LLMs feel like something more:

- They are trained on the output of this evolutionary system (the languages we have today)

- They learn statistical regularities of symbols, grammar, and various patterns from data alone, hinting that language is not innate, but learned

- They lack embodiment, grounding, joint attention, continuous world experience, but it is pretty amazing how far you can get without those things

The philosophical questions are interesting. Two key questions are:

- Do LLMs truly understand language, or are they merely manipulating symbols without grounding?

- Words (technically tokens) live in a vector space. Is their vector actually the meaning?

Recall Practice

Here are some questions useful for your spaced repetition learning. Many of the answers are not found on this page. Some will have popped up in lecture. Others will require you to do your own research.

- What are three views of language?

Language as mathematics (formal language), language as an evolutionary communication system (human language), and language as statistical patterns (LLMs).

- What is Mithen’s central claim about the nature of human language?

Language is not one thing — it is a puzzle made of many pieces (words, grammar, symbols, social cognition, vocal anatomy) that evolution assembled, imperfectly, over millions of years.

- What is the difference between open-class and closed-class words? Give examples of each.

Open-class words (nouns, verbs, adjectives) accept new members freely — "selfie," "to google." Closed-class words (prepositions, conjunctions, determiners) form a small, stable set and are the structural glue of syntax.

- What is fast mapping?

Fast mapping is the ability of children to infer a word’s meaning from a single exposure in context. Children acquire roughly 10 new words per day between ages 2 and 8 this way.

- What is prototype theory (Rosch), and what does it predict about category membership?

Categories are organized around best examples (prototypes) rather than necessary and sufficient conditions. Membership is graded — a robin is a better bird than a penguin. Category boundaries are fuzzy.

- Distinguish polysemy from homonymy, and give an example of each.

Polysemy: one word with multiple related meanings (foot: body part / base of a mountain). Homonymy: one word form with unrelated meanings (bat: animal / baseball bat).

- What are Grice’s four maxims of cooperative conversation?

Quantity (say enough, not too much), Quality (be truthful), Relation (be relevant), Manner (be clear).

- What is deixis? Give three examples.

Deixis refers to words whose reference shifts with context. Examples: I, here, now.

- What is Chomsky’s poverty of the stimulus argument?

Children acquire grammatical rules they have never seen exemplified in their input, which suggests that at least some linguistic knowledge is innate (the Language Acquisition Device). The stimulus is too impoverished for pure learning to account for the result.

- What does the emergence of Nicaraguan Sign Language demonstrate?

That grammar can emerge spontaneously from social conditions alone — deaf children in 1980s Nicaragua, without an adult sign language model, invented a full grammatical sign language within one generation.

- What is the difference between the strong and weak versions of the Sapir-Whorf hypothesis? Which is supported empirically?

Strong (linguistic determinism): language determines thought — we cannot think what we cannot say. Now largely discredited. Weak (linguistic relativity): language influences habitual thought. This is supported empirically, e.g., color term differences affecting discrimination speed.

- What is Byte-Pair Encoding (BPE), and why is it described as a compromise rather than a solution?

BPE is a tokenization algorithm that iteratively merges the most frequent character pairs to build a vocabulary. It is a compromise because it does not correspond to linguistically meaningful units — it is not segmenting into "words" in any phonological, morphological, or semantic sense.

- What does it mean to say that word vectors in embedding spaces encode lexical relations? Give an example.

The geometric structure of vector spaces reflects semantic relationships. The canonical example is king − man + woman ≈ queen, showing that analogical relationships are encoded as vector offsets.

- What other phenomena did language co-evolve with?

Social complexity, material culture, symbolic thought, and music — specifically the "Hmmmmm" hypothesis: a holistic, manipulative, multi-modal, musical, mimetic proto-language that preceded modern language.

- What is Peirce’s distinction between icons, indices, and symbols? Give an example of each.

Icons resemble their referent (a photo, a map). Indices are causally connected to their referent (smoke → fire). Symbols are arbitrary and conventional (the word "dog"; red meaning stop).

- What is the symbol grounding problem, and why does it matter for LLMs?

Symbols in a closed formal system only refer to other symbols — they are not grounded in perception or experience. LLMs manipulate symbols without perceptual grounding, raising the question of whether their "understanding" is genuine.

- What is joint attention, and why is it a prerequisite for language?

Joint attention is the ability of two individuals to attend to the same thing simultaneously. Without it, ostensive definition ("that’s a dog") cannot work. Infants develop it at ~9 months; pointing, which relies on it, is surprisingly rare in non-human primates.

Summary

We’ve covered:

- Three views of language

- Language Evolution

- Words

- Grammar

- Signs and symbols

- Human language connections to LLMs