Semantics

Syntax vs. Semantics

The semantics of a language assigns a meaning to each utterance (legal program) of the language. But where does this meaning come from? How it is assigned? It appears an inescapable fact that semantics is driven by the underlying structure of the utterances, not to each individual character. It is the arrangement of the symbols (characters) that gives a program its particular meaning. The arrangement, i.e., the syntax. Generally, semantics is given by mapping syntactic structures to their meaning.

In this introduction, we’ll be looking at ways to assign meaning, using the language Astro, which we saw previously in our notes on Syntax. Semantics is almost always defined from the abstract syntax of a language. So as a refresher, here’s the abstract syntax of the Astro:

$ \begin{array}{llcl} n: & \mathsf{Nml} & & \\ i: & \mathsf{Ide} & & \\ e: & \mathsf{Exp} & = & n \mid i \mid -e \mid e+e \mid e-e \mid e\,\mathtt{*}\,e \mid e\,/\,e \mid e\,\mathtt{\%}\,e \mid e\,\mathtt{**}\,e \mid i\;e^*\\ s: & \mathsf{Stm} & = & i = e \mid \mathtt{print}\;e\\ p: & \mathsf{Pro} & = & \mathtt{program}\;s^+ \end{array}$

Semantic definitions will assign meanings to each of these abstract forms.

Why Study Semantics?

Surely there are benefits to having a way to precisely, unambiguously know the meaning of every program you write. Why would you use a programming language for which no one knew what any of the programs did?

What’s New?

There aren’t many books about semantics from the 21st century. It feels like all the methods were made 50 years ago. Not unlike how syntax feels like a solved problem. But surely some things have changed? So I asked ChatGPT in mid-2024:

Have there been any advances in the field of programming language semantics (denotational, operational, etc.) in the last 20 years?.

Over the last 20 years, the field of programming language semantics, which includes denotational, operational, and axiomatic semantics, has seen numerous advances. Here are some key developments and trends:

- Domain-Specific Languages (DSLs): The rise of DSLs has prompted advances in the semantics of specialized languages, tailored for specific domains such as data analysis, web development, and scientific computing. Semantic techniques have been adapted to better support these languages.

- Probabilistic and Quantum Computing: Advances in probabilistic programming languages have led to new semantic frameworks to handle uncertainty and randomness, with applications in machine learning and AI. The semantics of quantum programming languages have been developed to support quantum computing paradigms, incorporating quantum mechanics principles into programming language theory.

- Concurrency and Parallelism: With the increase in multicore and distributed computing, new semantic models have been developed to better understand and reason about concurrent and parallel programs. Techniques such as compositional semantics and process calculi (e.g., the π-calculus) have been refined and extended.

- Type Theory and Dependent Types: Advances in type theory, especially the development of dependent type systems, have influenced the semantics of programming languages. Languages like Coq and Agda, which use dependent types, have rigorous semantic foundations that enable formal verification of program properties.

- Mechanized Semantics and Proof Assistants: The use of proof assistants (e.g., Coq, Isabelle, HOL) has grown, allowing for the formalization and mechanization of programming language semantics. These tools help in proving properties about languages and verifying the correctness of language implementations.

- Game Semantics: Game semantics has provided new insights into the interactive behavior of programs, especially in the context of programming languages with complex control structures and higher-order functions.

- Abstract Interpretation: Advances in abstract interpretation have provided powerful techniques for static analysis, helping to infer properties about programs and ensuring their correctness and safety. This has influenced both operational and denotational semantics.

- Software Verification and Model Checking: The integration of semantic techniques with software verification and model checking has been a significant trend. Techniques from operational semantics are often used to create models for verification tools.

- Language Interoperability: With the increasing need for interoperability between different programming languages, semantic frameworks have been developed to facilitate the translation and integration of programs written in different languages.

- Semantics of Scripting Languages: As scripting languages like JavaScript and Python have become more prevalent, there have been efforts to provide formal semantics for these languages to better understand their behavior and guide their development.

Okay then!

How do we “do” Semantics?

Meanings can be assigned to programs either formally or informally. Informal semantics are usually given in natural language, while formal semantics are given in a mathematical language. Formal semantics is the only way to be precise and unambiguous. But it’s also...hard. We’ll start with informal semantics, then move to formal semantics.

There’s a third way, actually. We can write an interpreter, and decree: “the meaning of a program in this language simply is the output of the interpreter.“

Seriously? The Third Way?It sounds silly, but it has one advantage: the so-called “compiler correctness problem“ and the so-called “interpreter correctness problem” aren’t problems anymore—the interpreter is correct by definition.

Informal Semantic Descriptions

The traditional way to assign meanings to programs is with natural language. These descriptions, to be fair, try to be super precise. Let’s see an example. We’ll attempt an informal semantics of the language Astro. We’ll be systematic, at least, covering each piece of the abstract syntax definition:

- A program executes its statements in order, generating a list of output values.

- Before the program begins, the following identifiers are bound like so:

- The identifier

πis bound to the number $\pi$. - The identifier

sqrtis bound to the function that returns the square root of its sole argument, which must evaluate to a number. - The identifier

sinis bound to to the function that returns the sine of its sole argument, which must evaluate to a number - The identifier

cosis bound to to the function that returns the cosine of its sole argument, which must evaluate to a number - The identifier

hypotis bound to to the function of two numeric arguments, $x$ and $y$ that returns $\sqrt{x^2+y^2}$

- The identifier

- The identifier

πis a read-only identifier. All other identifiers are not read-only; we call them writable. - An assignment statement evaluates its right hand side, an expression, then binds the identifier on its left hand side to this new numeric value; however the identifier must not have been previously assigned to, or if it had been, the identifier must be writable and bound to a number (not a function).

- A print statement evaluates its argument, then writes it to standard output.

- The unary and binary operator expressions evaluate their operands then do the obvious corresponding mathematical operation.

- A call expression evaluates its arguments, then calls the function bound to the identifier with these argument values. If the identifier was unbound or bound to a number (instead of a function), that is an error.

- An expression that is an identifier produces the value bound to that identifier, which must have been previously bound to a number (not a function).

Well that that was long-winded and who knows if we even caught everything?

In fact, the informal semantics is still imprecise! To get started, what does it be to be “bound”? What is “previously”? Let’s try to find more problems. There are quite a few!

And can you imagine doing this for a real language? It would take hundreds and hundreds of pages? Right? Right.

Okay on to formal semantics. But what does such a thing even look like? How do we formalize meaning?

Approaches to Formal Semantics

Let’s think of some ways we could capture the meaning of a program formally. Some things come to mind:

- We can show the effects of executing each construct on an abstract machine. (Operational)

- We can define functions that assign meanings in the form of mathematical objects to each construct. (Denotational)

- We can provide a formal logic allowing us to infer execution properties of programs via formal proof. (Axiomatic)

We can also mix elements of various approaches.

An Operational Approach

Let’s try to build a semantics for Astro, giving a set of rules which may be seen as simulating the execution of a program on some kind of abstract “machine.” For Astro, programs evaluate to the list of numbers they output. For example, the program:

x = 5;

print(sqrt(5 * 13 + 35));

print(3);

x = x + 21;

print(x / 8);

“evaluates to” the sequence $[10, 3, 3.25]$. So we want to define the relation $\Downarrow_{\small P}$ such that:

$p \Downarrow_{\small P} o$

means that program $p$ produces output $o$, where $o \in \mathsf{Float64}^*$. Since we are defining a programming language semantics, we’ll not use $\mathbb{R}$ but instead introduce $\mathsf{Float64}$ as the set of all members of IEEE binary64 and all the operations on these values to be as defined by that standard (including the workings of $\infty$ and $\mathsf{NaN}$).

The evaluation relation for programs must be defined in terms of its constituent statements and its constituent expressions. But hmmm, we know that we want 3+2 to evaluate to $5$, but how do we evaluate x+3? It depends on what x is bound to, hence expressions must be evaluated in a context that contains a mapping from identifiers to the entities they are bound to. This mapping is called a memory (in the US, or a store in the UK). But what are the identities actually bound to, if anything? Let’s define a type for this:

$$

\frac{}{\mathsf{Undef}\!: \mathsf{Value}}

\quad

\frac{x\!:\mathsf{Real}\;\;\;b\!:\mathsf{Bool}}{\mathsf{Num}\;x\;b\!:\mathsf{Value}}

\quad

\frac{f\!:\mathsf{Real^* \rightarrow Real}\;\;\;n\!:\mathsf{Nat}}{\mathsf{Fun}\;f\;n\!:\mathsf{Value}}

$$

This says an identifer is either undefined, a numeric variable together with its mutability status (true for mutable, false for immutable), or a function together with its arity.

Evaluating expressions, then, will require a relation:

$e,m \Downarrow_{\small E} x$

meaning that in the context of memory $m$, expression $e$ evaluates to $x$. Statements can both read and write memory, and they can append to the output. So:

$s,m,o \Downarrow_{\small S} (m', o')$

means that, if the context of memory $m$ with the output is currently $o$, then the execution of $s$ produces a new memory $m'$ and new output $o'$. Now we can define the operational semantics of Astro as follows (for brevity, dropping the subscripts on the evaluation relations since they can be inferred from context):

where $o_0$, the initial output, is defined to be the empty sequence, and the initial memory $m_0$ is our “standard library” defined as follows:

$\begin{array}{l} m_0 = (\lambda\,i.\;\mathsf{Undef}) [ \\ \quad \mathtt{π} \mapsto \mathsf{Num}\;\pi\;\mathsf{false}][ \\ \quad \mathtt{sqrt} \mapsto \mathsf{Fun}\;(\lambda x.\sqrt{x})\;1][ \\ \quad \mathtt{sin} \mapsto \mathsf{Fun}\;(\lambda x.\sin{x})\;1][ \\ \quad \mathtt{cos} \mapsto \mathsf{Fun}\;(\lambda x.\cos{x})\;1][ \\ \quad \mathtt{hypot} \mapsto \mathsf{Fun}\;(\lambda (x,y).\sqrt{x^2+y^2})\;2] \\ \end{array}$

To tighten up the definition a little, we should formalize the notion of memory and specify the types of the relations:

$\begin{array}{l} \textsf{Mem} = \textsf{Ide} \to \textsf{Value} \\ \textsf{Output} = \textsf{Float64}^* \\ \\ \Downarrow_{\small P} \;\subseteq \mathsf{Pro} \times \mathsf{Output} \\ \Downarrow_{\small S} \;\subseteq (\mathsf{Stm} \times \mathsf{Mem} \times \mathsf{Output}) \times (\mathsf{Mem} \times \mathsf{Output}) \\ \Downarrow_{\small E} \;\subseteq (\mathsf{Exp} \times \mathsf{Mem}) \times \mathsf{Float64} \end{array}$

More about operational semantics

We’ve only given a very brief introduction. There’s so much more. There are many styles of operational semantics, and for the style we’ve presented here, you’ll find both “big-step” and “small-step” flavors. Much more to come.

A Denotational Approach

Let’s try something else. It will look easier but it can only be justified with some really intense mathematics that we will not go into right now. For each abstract syntax form, we’ll give a partial function mapping the form to its meaning, a mathematical object known as a denotation:

This definitely looks simpler, but it all hinges on the idea that $\bot$ is much more than just the thing that stands in for values that something in a partial function does not map to. In a denotational semantics, $\bot$ needs to be treated as an element that can be used as an input to an operation, something we haven’t seen yet! Any function application that has $\bot$ as an argument will automatically return $\bot$. We need to go into some pretty deep and gnarly and difficult mathematics to actually justify it. More on this later in the course. It will be crazy. And oh, wait until you see what we need to do to justify recursive definitions. Get your inner mathematician ready.

We left a LOT out.

There is a great deal more to denotational semantics that what is shown here, but this gets us started. Entire books have been written on denotational semantics alone. There are many mathematical difficulties involved that need to be handled, particularly surrounding recursion. There is so much to cover.

It can be tough going mathematically, which is probably why operational semantics is a bit more popular.

Other Approaches

Another major approach is the axiomatic approach, which is a bit more focused on proofs of program correctness.

Methodologies

Here are some methodologies that have evolved in the world of formal semantics:

| Approach | Method | Ideas |

|---|---|---|

| Operational | Concrete Operational Semantics | Define an actual abstract machine to execute the program. |

| Structural Operational Semantics (Small-step) | A set of inference rules that show when each individual step of a computation can take place and what its effect is. | |

| Natural Semantics (Big-step) | A set of inference rules that show the effect of executing whole constructs without regard to intermediate computational states. | |

| Denotational | Direct Denotational Semantics | Define the meaning of each syntactic construct as a mathematical object in terms of its constituent constructs. |

| Continuation Semantics | Define the meaning of each syntactic construct, compositionally, as a mathematical object, where meanings are generally transformations modeling the “remainder of the program” (to better handle errors and disruptive control transfers). | |

| Axiomatic | Hoare Logic | A kind of axiomatic semantics (where the meaning is inferred from defining a set of invariant properties for each construct) focusing on proving the correctness of programs. |

| Algebraic Semantics | Define the semantics via an algebra. | |

| Hybrid | Action Semantics | Define meaning in a way that is more familiar to programmers, with actions, data, and yielders. |

Mathematics of Meaning

In the sneak peeks above you’ve just been given a taste of what formal semantic definitions look like.

How can we be sure they are truly formal, or truly unambiguous? What kind of mathematical objects work to covey the meaning of programs in a programming language? If your approach to semantics is super algebraic, you might want to check out Category Theory. But mostly, it’s the Lambda Calculus that everyone learns and knows and uses mostly.

We’ll study the Lambda Calculus pretty soon.

Resources

Where are some places to learn about semantics? Wikipedia is a good place to start:

There are a few books that cover formal semantics:

- Glynn Winskel, The Formal Semantics of Programming Languages, 1993.

- Hanne Riis Nielson and Flemming Nielson, Semantics with Applications, 1999.

- Kenneth Slonneger and Barry L. Kurtz, Formal Syntax and Semantics of Programming Languages, 1995.

- Matthew Hennessy, The Semantics of Programming Languages, Wiley, 1990.

- David Schmidt, Denotational Semantics, 1986.

- Carl A. Gunter, Semantics of Programming Languages, MIT Press, 1992.

- Michael J. C. Gordon, The Denotational Description of Programming Languages, Springer, 1979.

Also, I have course notes coming up for Operational, Denotational, and Axiomatic semantics. We’ll get to them soon.

Context, Again

Let’s revisit context once again.

What would you say about this Astro program?

print(x);

The grammar permits it, but the contextual constraint requiring all variables to have been previously assigned is violated. In fact,

- The operational semantics above never produces an output for this program (it “crashes”)

- The denotational semantics above produces $\bot$ for this program

In other words, the program is illegal, or perhaps, it’s not even an Astro program. The same goes for similar violations in many popular programming languages, such as:

- Use of undeclared identifiers

- Redeclaration of identifiers within a scope (unless overloading permitted)

- Use of an identifier where it may not have been initialized (only in some languages)

- Assignment to a read-only variable

- Modification of an immutable object

- Using an entity improperly (violating access controls, configuration attributes, etc.)

- Failure to call a private method

- Failure to use a local variable

- Match or switch not exhaustive

- Function in which all paths do not have an explicit return

- Wrong number of arguments passed to a function

- Passing r-values to reference parameters

But are these syntactically illegal or semantically illegal?

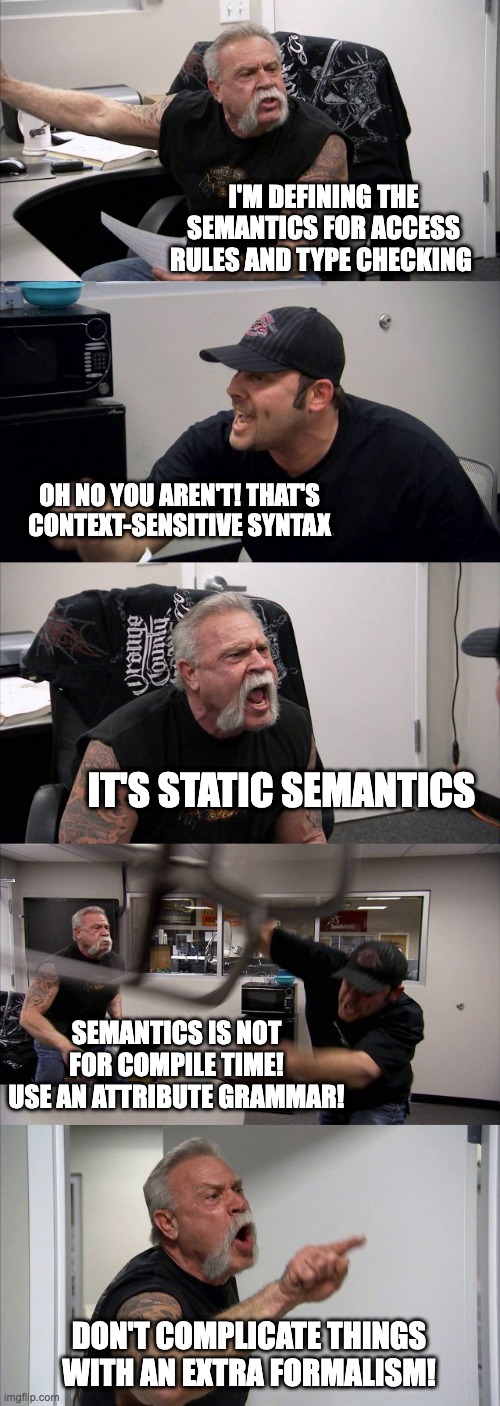

We did, after all, allow this program in our syntax, and wrote semantic rules to ensure the program was erroneous. But, you know, we certainly could have detected this error without ever having run the program! In some sense, it wouldn’t be that wrong to say it’s an syntactically ill-formed program. Right? Some people actually argue pretty strongly about the terminology:

Use CFG/EBNF/PEG

Use CFG/EBNF/PEG

Use attribute grammars or two-level grammars

Use semantic rules

Use semantic rules

Use semantic rules

In the semantics definitions for Astro above, we handled all the contextual rules in the semantics. In fact we completely conflated the static and dynamic semantics, mashing them both together in a single set of rules. In practice, you might see two sets of rules, one that just checks the contextual constraints, and the second defining the run-time behavior, under the assumption that the constraints have already been checked.

We’ve seen the compile-time vs. run-time distinction before:

Statics

Things we can know about a program before it is run, simply by inspecting the source code.

Dynamics

Things we only know about a program while it is running, such as certain effects on the environment, which may be driven by external inputs unknown before execution.

Perhaps the proper way to frame this “debate” is to look at the syntax vs. semantics question as orthogonal to the statics vs. dynamics question:

| Syntax (Structure) | Semantics (Meaning) | |

|---|---|---|

| Statics (before execution) | Dynamics (during execution) | |

| What are the structural entities (e.g., declarations, expressions, statements, modules) and how do they fit together, perhaps with punctuation? | What are the non-structural rules that define a legal program (e.g., type checks, argument-parameter matching rules, visibility rules, etc.)? | What does a program do? What effects do each of the forms of a well-structured, legal program have on the run-time environment? |

Though statics and dynamics are very general terms, most beginners hear them first in the context of type systems. You’ll hear folks speak of a statically-typed language as one in which type checking is done prior to program execution and a dynamically typed language as one in which type checking is done during program execution. In reality, most languages do a little of both, but one or the other usually predominates.

Semantics in Practice

Formal semantics, and formal methods in general (e.g., proofs of correctness) seem to be underused in practice, except in certain life-critical domains. But the benefit of studying formal semantics leads to much more rigorous approaches to software correctness and security, a very good thing indeed.

Applying semantic rules at compile-time is part of what’s known as static analysis. During static analysis we look for outright errors, of course, but we also look for suspect practices that have the potential for errors or security vulnerabilities. This latter part of static analysis is necessarily outside the code of formal semantics, but it is an extremely important pragmatic issue that requires the developer’s full attention. To learn more about this area of static analysis, you can see my notes on the topics in the context of compiler construction, or even better, to get a sense of the massive scale of static semantic rules in languages, browse the following:

- List of (potential) Java bugs detectable by FindBugs™

- List of (potential) Java bugs detectable by PMD

- List of (potential) JavaScript bugs detectable by PMD

- The rules of ESLint

Recall Practice

Here are some questions useful for your spaced repetition learning. Many of the answers are not found on this page. Some will have popped up in lecture. Others will require you to do your own research.

- What is the difference between syntax and semantics? Syntax is the structure; semantics is meaning.

- What is generally used as the “input” to a semantic definition? The abstract syntax.

- What are three approaches to defining a semantics? (1) Informally, with natural language, (2) formally, with mathematical language, and (3) by writing an interpreter and decreeing that the meaning of a program is the output of the interpreter when run on that program.

- How is semantics typically defined? Semantics is typically defined informally, using natural language.

- Why do most language definitions provide a formal syntax but not a formal semantics? Syntax is normally context free and very easy to mathematically formalize; semantic definitions often feature quite a few ad-hoc constraints and lots of contextual information which is more clunky to formalize.

- When a formal semantics is defined, to what are meanings assigned? Meanings are assigned to each construct in the abstract syntax.

- What are the three most popular approaches to defining a formal semantics? Denotational, operational, and axiomatic.

- What are denotational semantics, operational semantics, and axiomatic semantics? Denotational semantics assigns mathematical objects to each construct; operational semantics simulates the execution of a program on an abstract machine; axiomatic semantics defines the meaning of each construct in terms of a formal logic.

- In an operational semantics, relations, not functions, are typically used. Why? Relations make it easy to model the behavior of illegal programs: we simply don’t define anything at all for a program in an illegal state to do.

- In an operational semantics, how do we typically model states for expressions? For statements? Expressions are modeled as a pair of an expression and a memory; statements are modeled as a triple of a statement, a memory, and I/O streams.

- What does the rule for evaluating a negation expression look like in an operational semantics? $\dfrac{e,m \Downarrow x}{[\![-e]\!],m \Downarrow -x}$

- What does the rule for evaluating a negation operator expression look like in an denotational semantics? $\mathscr{E}[\![-e]\!]\,m = -\mathscr{E}e\,m$

- How are errors modeled in a typical denotational semantics? Errors are typically modeled as a special value, $\bot$.

- Why is denotational semantics hard? The underlying mathematics required to justify techniques like recursion require some pretty arcane knowledge.

- What are some branches of mathematics used in denotational semantics? Category theory and lambda calculus are two big ones.

- What is the debate between what to call the language definition rules that cannot be captured in a context-free formalism? Some people call it context-sensitive syntax and others call it static semantics.

- What is the difference between static semantics and dynamic semantics? Static semantics deals with meaning we can infer and check just by looking at the program itself (i.e., before the program is run, or “at compile time”); Dynamic semantics deals with the meaning of the program in terms of its effects at run time.

- What do static analyzers do? Static analyzers look for errors and suspect practices in code that could lead to errors or security vulnerabilities.

- What are two popular semantic analyzers for Java? FindBugs and PMD

Summary

We’ve covered:

- Where semantics comes from

- The drawbacks of an informal, even if precise, semantic specification

- Broad approaches to formal semantics

- Where to learn about formal semantics

- The problem of context

- Semantics in practice